Kentik Blog

Today almost every type of organization must be prepared to mitigate DDoS attacks and proactively defend its network. In this post, we discuss the newly added BGP Flowspec support in Kentik Detect, which gives our customers the granularity needed to detect attack traffic in real time and mitigate attacks faster.

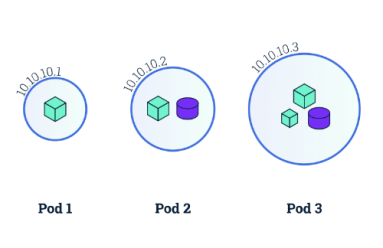

In this blog post, we provide a starting point for understanding the networking model behind Kubernetes and how to make Kubernetes networking simpler and more efficient.

Broadband providers must manage high infrastructure costs on a price-per-bit basis. Visibility into their network performance and security is critical. In this post, we dig into how that led Viasat, a global provider of high-speed satellite broadband services and secure networking systems, to tap Kentik for help.

Building a cloud application is like building a house. If you don’t at least acknowledge the industry’s best practices, it may all come tumbling down. Here we look at AWS’ Well-Architected Framework as a good starting point for building effective cloud applications and outline why having the right tools in place can make all the difference.

If you are a service provider or enterprise providing your customers with traffic visibility into their networks, there are things you must consider. In this post, we will look into design considerations for self-service network analytics portals.

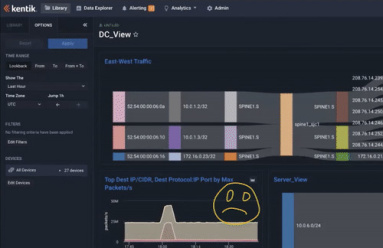

For NetOps and SecOps teams, alarms typically mean many more hours of work ahead. That doesn’t have to be the case. In this post, we look at how to troubleshoot an abnormal traffic spike and get to root cause in under five minutes.

“Wow!” moments don’t happen randomly. At Kentik, they reflect product value, differentiators, and the hard work of our engineering team. In this post, we look at a few of those moments when the insight delivered by Kentik made customers say, “Wow, that was amazing!”

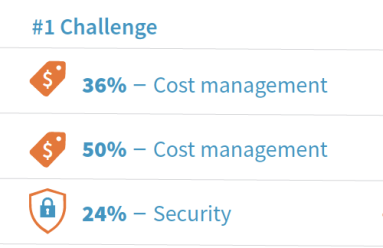

Today we released a new report: “AWS Cloud Adoption, Visibility & Management.” The report compiles an analysis based on a survey of 310 executive and technical-level attendees at the recent AWS user conference. Simply put, we found: It’s a multi-cloud, cost-containment world.

In a new report, “2019 Trends in Cloud Transformation,” 451 Research analysts dig into seven trends shaping the move to the cloud, with recommendations and clear winners and losers for each trend. In this post, we provide an overview and a licensed copy of the report for you.

Five network-related cloud deployment mistakes that you might not be aware of can negate the cloud benefits you’re hoping to achieve. In this post, we provide an overview of each mistake and a guide for avoiding them all.