Kentik Blog: NetFlow

Flow data has always held immense potential, but was often inaccessible because it lacked context and speed. Kentik removes that friction by automatically enriching flow with human-readable context, making it a daily driver for everyone, not just specialists.

The tools we use shape how we see problems. When flow and metrics are siloed, so is your visibility.

Modern network operators need modern observability tools. In this post, we explore why Deepfield, a traditional network flow analytics platform, falls short of providing the comprehensive insights required for today’s network operations, and how Kentik’s modern data platform is purpose-built for today’s infrastructure teams.

Testing NetFlow used to require time, expertise, and lab equipment. Using Kentik and the new Kappa agent, it can be done in minutes with nothing more than a spare Linux machine.

Does flow sampling reduce the accuracy of our visibility data? In this post, learn why flow sampling provides extremely accurate and reliable results while also reducing the overhead required for network visibility and increasing our ability to scale our monitoring footprint.

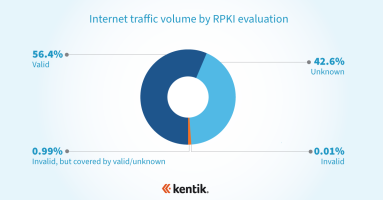

RPKI is the internet’s best defense against BGP hijacks. What is it? And how does it protect the majority of your outbound traffic from accidental BGP hijacks without posing a risk to legitimate traffic?

What is the difference between SNMP and flow technologies? Who uses the data sets? And what benefits do these technologies bring to network professionals? In this post, we explain how and why to use both.

The most commonly used network monitoring tools in enterprises were created specifically to handle only the most basic faults with traditional network devices. CTO Jonah Kowall explains why these tools don’t scale to meet today’s network visibility needs, why more enterprises are moving from faults & packets to flow, and how Kentik can help.

Kentik’s Aaron Kagawa explains why today’s network analytics solutions require new types of contextual data and introduces the concept of Universal Data Records. Learn how and why Kentik is moving beyond network flow data.

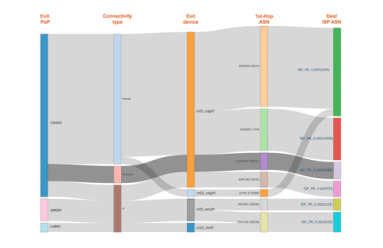

In our latest post on Interface Classification, we look beyond what it is and how it works to why it’s useful, illustrated with a few use cases that demonstrate its practical value. By segmenting traffic based on interface characteristics (Connectivity Type and Network Boundary), you’ll be able to easily see and export valuable intelligence related to the cost and ROI of carrying a given customer’s traffic.

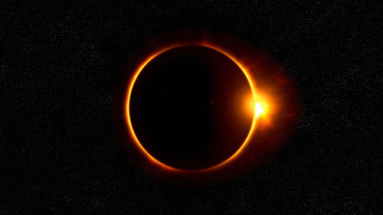

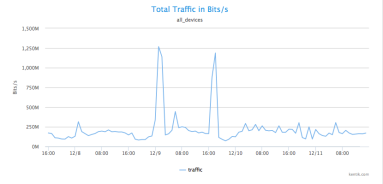

With much of the country looking skyward during the solar eclipse, you might wonder how much of an effect there was on network traffic. Was there a drastic drop as millions of watchers were briefly uncoupled from their screens? Or was that offset by a massive jump in live streaming and photo uploads? In this post we report on what we found using forensic analytics in Kentik Detect to slice traffic based on how and where usage patterns changed during the event.

Kentik addresses the day-to-day challenges of network operations, but our unique big network data platform also generates valuable business insights. A great example of this duality is our new Interface Classification feature, which streamlines an otherwise-tedious technical task while also giving sales teams a real competitive advantage. In this post we look at what it can do, how we’ve implemented it, and how to get started.

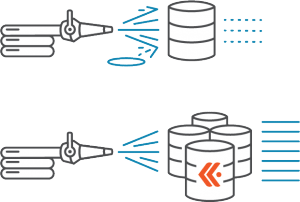

Obsolete architectures for NetFlow analytics may seem merely quaint and old-fashioned, but the harm they can do to your network is no fairy tale. Without real-time, at-scale access to unsummarized traffic data, you can’t fully protect your network from hazards like attacks, performance issues, and excess transit costs. In this post we compare three database approaches to assess the impact of system architecture on network visibility.

Most of the testing and discussion of flow protocols over the years has been based on enterprise use cases and fairly low-bandwidth assumptions. In this post we take a fresh look, focusing instead on the real-world traffic volumes handled by operators of large-scale networks. How do NetFlow and other variants of stateful flow tracking compare with packet sampling approaches like sFlow? Read on…

Not long ago, network flow data was a secondary source of data for IT departments trying to better understand their network status, traffic, and utilization. Today it’s become a leading focus of analysis, yielding valuable insights in areas including network security, network optimization, and business processes. In this post, senior analyst Shamus McGillicuddy of EMA looks at the value and versatility of flow for network analytics.

“NetFlow” may be the most common shorthand term for network flow data, but that doesn’t mean it’s the only important flow protocol. In fact, there are three primary flavors of flow data (NetFlow, sFlow, and IPFIX), as well as a variety of brand-specific names used by various networking vendors. To help clear up any confusion, this post looks at the main flow-data protocols supported by Kentik Detect.

Cisco Live 2016 gave us a chance to meet with BrightTalk for some video-recorded discussions on hot topics in network operations. This post focuses on the first of those videos, in which Kentik’s Jim Frey, VP Strategic Alliances, talks about the complexity of today’s networks and how Big Data NetFlow analysis helps operators achieve timely insight into their traffic.

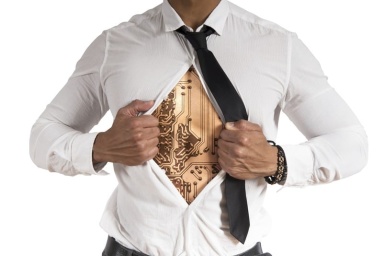

In most digital businesses, network traffic data is everywhere, but legacy limitations on collection, storage, and analysis mean that the value of that data goes largely untapped. Kentik solves that problem with post-Hadoop Big Data analytics, giving network and operations teams the insights they need to boost performance and implement innovation. In this post we look at how the right tools for digging enable organizations to uncover the value that’s lying just beneath the surface.

The plummeting cost of storage and CPU allows us to apply distributed computing technology to network visibility, enabling long-term retention and fast ad hoc querying of metadata. In this post we look at what network metadata actually is and how its applications for everyday network operations — and its benefits for business — are distinct from the national security uses that make the news.

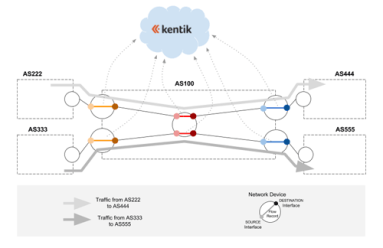

In part 2 of this series, we look at how Big Data in the cloud enables network visibility solutions to finally take full advantage of NetFlow and BGP. Without the constraints of legacy architectures, network data (flow, path, and geo) can be unified and queries covering billions of records can return results in seconds. Meanwhile the centrality of networks to nearly all operations makes state-of-the-art visibility essential for businesses to thrive.

Clear, comprehensive, and timely information is essential for effective network operations. For Internet-related traffic, there’s no better source of that information than NetFlow and BGP. In this series we’ll look at how we got from the first iterations of NetFlow and BGP to the fully realized network visibility systems that can be built around these protocols today.

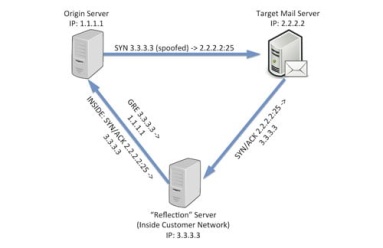

By actively exploring network traffic with Kentik Detect you can reveal attacks and exploits that you haven’t already anticipated in your alerts. In previous posts we showed a range of techniques that help determine whether anomalous traffic indicates that a DDoS attack is underway. This time we dig deeper, gathering the actionable intelligence required to mitigate an attack without disrupting legitimate traffic.

With massive data capacity and analytical flexibility, Kentik Detect makes it easy to actively explore network traffic. In this post we look at how to use this capability to rapidly discover and analyze interesting and potentially important DDoS and other attack vectors. We start with filtering by source geo, then zoom in on a time-span with anomalous traffic. By looking at unique source IPs and grouping traffic by destination IP we find both the source and the target of an attack.

If your network visibility tool lets you query only those flow details that you’ve specified in advance, then you’re likely vulnerable to threats that you haven’t anticipated. In this post we’ll explore how SQL querying of Kentik Detect’s unified, full-resolution datastore enables you to drill into traffic anomalies, identify threats, and define alerts that notify you when similar issues recur.

DDoS attacks impact profits by interrupting revenue and undermining customer satisfaction. To minimize damage, operators must be able to rapidly determine if a traffic spike is a true attack and then to quickly gather the key information required for mitigation. Kentik Detect’s Data Explorer is ideal for precisely that sort of drill-down.

The team at Kentik recently tweeted: “#Moneyball your network with deeper, real-time insights from #BigData NetFlow, SNMP & BGP in an easy to use #SaaS.” There are a lot of concepts packed into that statement, so we thought it would be worth unpacking for a closer look.

For many of the organizations we’ve all worked with or known, SNMP gets dumped into RRDTool, and NetFlow is captured into MySQL. This arrangement is simple, well-documented, and works for initial requirements. But simply put, it’s not cost-effective to store flow data at any scale in a traditional relational database.

Taken together, three additional attributes of Kentik Detect — self-service, API-enabled, and multi-tenant — further enhance the fundamental advantages of Kentik’s cloud-based big data approach to network visibility.