Summary

The plummeting cost of storage and CPU allows us to apply distributed computing technology to network visibility, enabling long-term retention and fast ad hoc querying of metadata. In this post we look at what network metadata actually is and how its applications for everyday network operations — and its benefits for business — are distinct from the national security uses that make the news.

Making Flow Records a Force for Good on Your Network

Ever since revelations by Edward Snowden raised public awareness about government surveillance and data privacy, the term “metadata” has been tainted with sinister connotations. Regardless of your personal stance on the “war on terror,” it’s hard to overstate the role that telecom metadata has played in intelligence operations. As highlighted in the film “Zero Dark Thirty,” for example, the CIA used mobile phone call records to understand the existence, relationships, and movement of key Al Qaeda players, and eventually put those pieces together to locate Osama bin Laden.

While metadata’s role in counterterror operations is dramatic, national security is by no means the only or even the most widespread metadata use case. In fact, the network infrastructure of nearly every business that uses IP networks — which these days is effectively everyone — generates volumes of metadata on an hourly basis. Leveraged effectively by network operators, this data (NetFlow, GeoIP, and BGP) can provide valuable operational and business insight. And due to striking advances in distributed computing as well as in disk and CPU economics, you can take advantage of IP metadata without having the financial and personnel resources of the CIA.

What is Metadata?

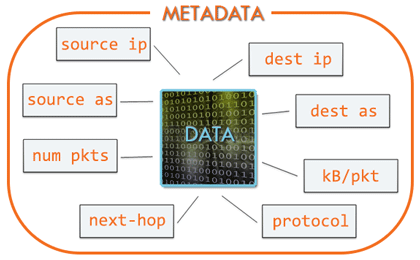

In the literal sense, metadata is “data about data” (as distinct from the underlying data itself). In the context of telecom networks, we’re talking about call detail records (CDRs): who talked to whom, when, and for how long. Note that these records do not include the actual contents of the conversations. For IP networks, the metadata is similar except that instead of phone calls the records document packet flows. In a flow record, the “who” and “whom” are IP addresses and port numbers, the “when” is the flow’s start and end timestamps, and the “how long” becomes “how much”: byte and packet counts.
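To make the shape of a flow record concrete, here is a minimal Python sketch. The class and field names are illustrative assumptions, not the wire format of NetFlow, sFlow, or IPFIX, and the addresses come from documentation-reserved ranges. Note what is absent: there is no payload field.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class FlowRecord:
    """Illustrative flow record; field names are assumptions, not a protocol layout."""
    src_ip: str          # the "who"
    src_port: int
    dst_ip: str          # the "whom"
    dst_port: int
    protocol: int        # IP protocol number (6 = TCP, 17 = UDP)
    start: datetime      # the "when"
    end: datetime
    byte_count: int      # the "how much"; packet contents are never stored
    packet_count: int

    @property
    def duration(self) -> timedelta:
        """Elapsed time between the first and last packet of the flow."""
        return self.end - self.start


# A made-up HTTPS flow between two documentation-range addresses.
record = FlowRecord(
    src_ip="192.0.2.10", src_port=51514,
    dst_ip="198.51.100.7", dst_port=443,
    protocol=6,
    start=datetime(2024, 1, 1, 12, 0, 0),
    end=datetime(2024, 1, 1, 12, 0, 30),
    byte_count=48_000, packet_count=40,
)

print(record.duration.total_seconds())           # flow length in seconds
print(record.byte_count / record.packet_count)   # average packet size in bytes
```

Even this small record answers who, whom, when, and how much without touching the content of a single packet.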

Just like with CDRs, in flow records the metadata is external to the actual content of the packets in the flow. There’s much that can be learned from capturing the packets themselves and studying them for deep, direct details on infrastructure and network phenomena. But that also involves a level of technical complexity and expense that in many situations won’t yield much more actionable insight than an effective, comprehensive system for the collection and analysis of metadata in the form of flow records.

One key practical advantage of IP metadata is that it’s often already readily available as a byproduct of some other process (e.g. usage-based billing) from components such as routers and switches that are already installed in the network. It’s also easier and more affordable to collect and store than packet capture (pcap). The biggest advantage of metadata, though, is that it’s pervasive: an always-on stream of information from every part of your network.

Analysis (R)evolution

Network metadata has been available for a relatively long time. For IP networks, we’ve had a couple of decades of NetFlow, with the more recent addition of sFlow, IPFIX, etc. For telecom networks, the CDR has been around much longer than that. Until recently, though, metadata use cases have been limited to non-real-time evidence gathering, like generating predefined traffic summaries or pulling out proof of conversations or connections among known phone numbers or endpoints. The cost of storage meant that the data couldn’t be retained for very long. And the high cost of compute — due largely to the lack of distributed architecture — meant that we couldn’t easily ask ad hoc questions or derive real-time insights with any level of detail.

What’s changed? The costs of disk and CPU have plummeted, and distributed computing technology allows us to aggregate storage and horsepower in ways that are much more powerful than just the “sum of parts.” That enables long-term retention of metadata and fast ad hoc queries. So now we can design systems that allow us not only to get proof of known events and conditions, but also to quickly discover previously unknown actors, events, and conditions within the network. In many cases, fast analysis across pervasive metadata can deliver more value than sparse points of deep packet data surrounded by large gaps in time or location.

Delivering Value

In “Zero Dark Thirty” we saw the CIA use metadata in two ways. First, retroactive analysis of collected metadata allowed them to uncover the existence and identity of unknown individuals in the Al Qaeda network who could potentially lead to bin Laden. Second, analysis of live metadata allowed them to know the location of those individuals in real time, and when to launch tactical operations.

Given the right tools, IP network operators can also leverage both retroactive and real-time analysis of network metadata. In the past, diagnosing revenue-impacting events on the network — outages, congestion, attacks, etc. — often required that they be “caught in the act” while the traffic was still present on the network. For operators, that’s an extremely frustrating and time-consuming constraint. But with the ability to perform retroactive analysis of unsummarized network metadata, we can now look back at the finest details of historical traffic, which empowers us to quickly spot patterns and root causes.
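As a rough illustration of retroactive analysis, the Python sketch below asks an ad hoc question of retained, unsummarized flow records: which sources sent the most bytes to one destination during a past time window? The `Flow` tuple, the `top_sources` helper, and the sample data are all hypothetical; a production system would run this kind of query across a distributed datastore rather than an in-memory list.

```python
from collections import Counter
from datetime import datetime
from typing import NamedTuple


class Flow(NamedTuple):
    """Hypothetical, simplified flow record used only for this example."""
    start: datetime
    src_ip: str
    dst_ip: str
    dst_port: int
    byte_count: int


def top_sources(flows, dst_ip, window_start, window_end, n=3):
    """Rank source IPs by bytes sent to dst_ip within [window_start, window_end)."""
    totals = Counter()
    for flow in flows:
        if flow.dst_ip == dst_ip and window_start <= flow.start < window_end:
            totals[flow.src_ip] += flow.byte_count
    return totals.most_common(n)


# Made-up records standing in for retained, unsummarized flow data.
flows = [
    Flow(datetime(2024, 1, 1, 2, 5), "203.0.113.5", "198.51.100.7", 443, 900_000),
    Flow(datetime(2024, 1, 1, 2, 6), "203.0.113.9", "198.51.100.7", 443, 120_000),
    Flow(datetime(2024, 1, 1, 2, 7), "203.0.113.5", "198.51.100.7", 443, 600_000),
    Flow(datetime(2024, 1, 1, 9, 0), "203.0.113.9", "198.51.100.7", 443, 5_000_000),
]

# Look back at the 02:00-03:00 window, hours after the traffic has left the network.
result = top_sources(
    flows, "198.51.100.7",
    datetime(2024, 1, 1, 2, 0), datetime(2024, 1, 1, 3, 0),
)
print(result)
```

Because the records were kept unsummarized, the question did not have to be anticipated at collection time; a predefined hourly summary keyed only by destination could not have answered it.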

The enhanced performance of distributed systems also helps us in the realm of real-time analysis, making it possible to see problems as they develop, to rapidly drill down to areas of concern, and to take corrective action based on informed conclusions. And by applying lessons learned from historical data, we can set up alerts that notify us in real time when similar conditions recur, so that they can be quickly (or even automatically) remediated. (For examples of both retroactive and real-time analysis with Kentik Detect, see Detecting Hidden Spambots.)
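One simple way to turn historical data into a real-time alert is a baseline threshold, sketched below in Python. This is an assumption-laden illustration, not a description of how Kentik Detect computes alerts: the function names, the choice of mean plus three standard deviations, and the traffic figures are all invented for the example.

```python
from statistics import mean, pstdev


def build_threshold(history_mbps, k=3.0):
    """Derive an alert threshold from past traffic: mean plus k standard deviations."""
    return mean(history_mbps) + k * pstdev(history_mbps)


def should_alert(current_mbps, threshold):
    """Return True when the live measurement exceeds the historical threshold."""
    return current_mbps > threshold


# Made-up historical traffic rates (Mbps) for one interface.
history = [100.0, 110.0, 90.0, 105.0, 95.0]
threshold = build_threshold(history)

# Compare a few made-up live readings against the learned threshold.
for live in (115.0, 150.0):
    print(live, should_alert(live, threshold))
```

The longer the retained history, the better such a baseline can reflect daily or weekly patterns, which is one practical payoff of long-term metadata retention.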

What’s Holding You Back?

As more and more business operations become enabled by or reliant on IP networks, the actionable insights available from metadata become increasingly critical. Like the analytical wizards who turned mundane baseball statistics into a competitive advantage on the field, you can accumulate continuous improvements based on network data to Moneyball Your Network.

So what’s currently keeping you from deriving the full value of your network metadata? If you’re using legacy network monitoring tools that are based on pre-cloud designs, then it’s likely that processing and storage constraints are forcing you to discard most of that value. That’s a shame, because the data you’re throwing away could be used to boost performance, cut costs, and improve ROI. As a network data analysis solution built on state-of-the-art Big Data architecture, the Kentik Network Observability Cloud can help. And because Kentik is delivered as an efficient SaaS, the cost of entry is surprisingly low. So if you’re ready to derive maximum value from your network metadata, take us for a test drive. Sign up now for a free 30-day trial.