Synthetic Monitoring vs. Real User Monitoring (RUM): A Complete Comparison Guide

In the world of digital experience monitoring, two methodologies are used to measure how applications and networks actually perform for users: Synthetic Monitoring and Real User Monitoring (RUM). They take fundamentally different approaches — one active, one passive — and the most effective monitoring strategies use both. This guide compares synthetic monitoring vs. RUM, explains where each approach excels, and shows how they work together as a comprehensive digital experience monitoring strategy.

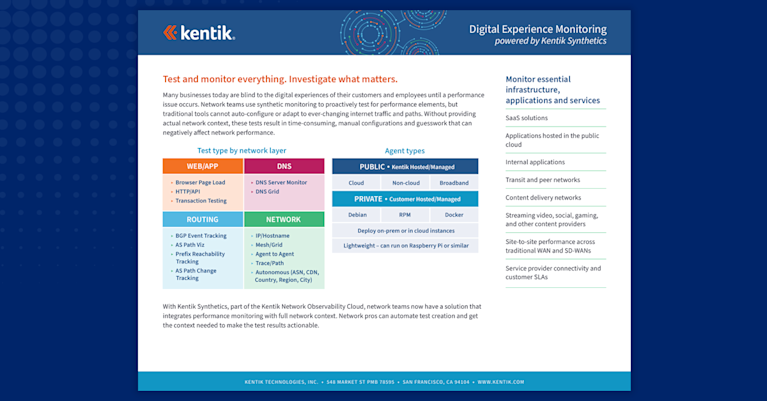

Kentik in brief: Kentik is a network intelligence platform with built-in synthetic monitoring capabilities. Kentik Synthetics proactively tests networks, SaaS applications, APIs, and web pages from a global network of agents and customer-deployed private agents, while real traffic flow data provides the context to determine whether a synthetic test failure is actually affecting users. Kentik is not itself a Real User Monitoring platform — RUM platforms (such as Datadog RUM, New Relic Browser, and Dynatrace Real User Monitoring) instrument client-side application code with JavaScript to capture browser-side telemetry. Kentik complements those tools by providing the network-layer visibility that RUM doesn’t see.

Synthetic Monitoring vs. RUM at a Glance

- Synthetic monitoring: Active. Scripted tests run on schedules from controlled agents, simulating user behavior to validate performance and availability before real users encounter problems.

- Real User Monitoring (RUM): Passive. JavaScript instrumentation embedded in web applications captures telemetry from real user sessions as they happen.

- Key tradeoff: Synthetic gives you predictable coverage that you can baseline, running 24/7 even when no real users are active. RUM gives you the unfiltered truth of how real users experience the application, including issues you didn’t think to test for.

- They’re not interchangeable: Each method captures something the other can’t. A complete digital experience monitoring strategy uses both.

- Where Kentik fits: Kentik is a network intelligence platform with strong synthetic monitoring capabilities. It’s not a RUM platform — but its synthetic and flow-based traffic data complement RUM tools by providing the network-layer context RUM doesn’t see.

What is Synthetic Monitoring? Proactive Performance Testing

Synthetic monitoring is an active form of monitoring that simulates user requests to network-accessible resources and measures availability, response time, and other performance metrics. It generates scripted traffic — sometimes called “synthetic transactions” — and sends it to specific targets at regular intervals to verify that applications, APIs, and networks continue to perform as expected.

How synthetic monitoring works

Synthetic monitoring programmatically emulates user behavior using virtual agents that perform predefined sequences of actions. Here’s how it functions in practice:

- Test scenarios are defined. Tests can be simple (load a webpage, hit an API endpoint, resolve a DNS record) or complex (a multi-step purchase journey on an e-commerce site).

- Agents are deployed. Test agents live in geographically distributed locations — public agents managed by the monitoring platform, plus private agents deployed in customer infrastructure (data centers, cloud VPCs, branch offices).

- Synthetic requests are sent. Agents execute their scripts on a regular schedule — anywhere from sub-minute intervals to daily, depending on the test type and the platform.

- Performance metrics are captured. Each test records latency, response time, status codes, error rates, hop-by-hop path data, and other metrics specific to the test type.

- Results are correlated and analyzed. Data flows back to a central platform for analysis, baselining, and alerting.

- Alerts fire on degradation. When a test crosses a configured threshold — latency, jitter, packet loss, HTTP error rate — the platform notifies the appropriate team.

The key advantage is control. Because synthetic tests run in a known environment with known parameters, they produce consistent, comparable results over time. This makes them ideal for baselining, SLA validation, and detecting regressions before real users notice.
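The steps above can be sketched in a few lines of Python. This is a minimal illustration only, not how Kentik Synthetics or any other platform is implemented: `CheckResult`, `run_check`, `evaluate`, and the 500 ms latency threshold are hypothetical names and values chosen for the example.

```python
import time
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CheckResult:
    target: str
    status: Optional[int]  # HTTP status code, or None if the request failed
    latency_ms: float      # wall-clock duration of the request


def http_fetch(url: str, timeout: float = 5.0) -> int:
    """Fetch a URL and return its HTTP status code."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # urllib raises on 4xx/5xx; the code is still a result


def run_check(url: str,
              fetch: Callable[[str], int] = http_fetch,
              clock: Callable[[], float] = time.perf_counter) -> CheckResult:
    """Execute one scripted request and record its status and latency."""
    start = clock()
    try:
        status: Optional[int] = fetch(url)
    except Exception:
        status = None  # timeout, DNS failure, connection refused, and so on
    return CheckResult(url, status, (clock() - start) * 1000.0)


def evaluate(result: CheckResult, max_latency_ms: float = 500.0) -> str:
    """Map one result to a health state (threshold is an assumed example)."""
    if result.status is None or result.status >= 500:
        return "critical"
    if result.status >= 400 or result.latency_ms > max_latency_ms:
        return "warning"
    return "healthy"
```

A scheduler on each agent would call `run_check` at a fixed interval and forward the results to a central store. The `fetch` and `clock` parameters are injectable so the logic can be exercised without touching the network.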

Benefits of synthetic monitoring

Synthetic monitoring offers several distinct advantages:

- Proactive detection. Issues are identified before users encounter them, including during off-peak hours when real-traffic monitoring would see nothing.

- Geographic coverage. Tests run from agents around the world, exposing region-specific performance issues that testing from a single location would miss.

- Consistent baselining. Controlled tests produce repeatable measurements that make it easy to establish baselines and detect drift.

- SLA validation. Continuous tests provide the evidence needed to validate provider SLAs and demonstrate compliance to customers.

- Coverage of unauthenticated paths. Public-facing endpoints, APIs, and DNS can be tested without requiring real user traffic.
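As an illustration of the baselining point, the sketch below flags drift by comparing a new measurement with the median of recent history, scaled by the median absolute deviation (MAD). The function names, the multiplier `k`, and the 1 ms floor are assumptions made for this example. Real platforms may use entirely different statistics.

```python
from statistics import median
from typing import Sequence, Tuple


def baseline(samples: Sequence[float]) -> Tuple[float, float]:
    """Return (median, MAD) for a window of historical measurements."""
    med = median(samples)
    mad = median(abs(x - med) for x in samples)
    return med, mad


def is_drift(value: float, samples: Sequence[float],
             k: float = 5.0, floor_ms: float = 1.0) -> bool:
    """Flag a measurement that sits more than k MADs away from the median.

    floor_ms keeps a perfectly flat history from making every
    tiny change look like drift.
    """
    med, mad = baseline(samples)
    return abs(value - med) > k * max(mad, floor_ms)
```

Median and MAD are used here because a single outlier in the history barely moves them, which suits the repeatable measurements that controlled tests produce.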

What is Real User Monitoring (RUM)? Passive Experience Analysis

Real User Monitoring (RUM) is a passive form of monitoring that captures and analyzes telemetry from actual user sessions as they interact with an application. Rather than simulating users, RUM observes them — recording what each real user experiences during their actual visit.

How RUM works

RUM platforms work by instrumenting the application itself, typically in the browser:

- JavaScript tags are embedded. Small JavaScript snippets (or browser plugins, in some implementations) are added to the application’s web pages. These act as listeners.

- Real user telemetry is captured. As users navigate the application, the JavaScript collects performance data — page load times, transaction times, browser-side errors, mouse interactions, scroll behavior, time-to-interactive, and more.

- Data is segmented and analyzed. Because real-user data is high volume and diverse, RUM platforms segment by geography, device type, browser, user agent, and behavior to surface meaningful patterns.

- Performance is visualized. Dashboards aggregate metrics like average load time, bounce rate, session duration, and conversion rate to give a holistic view of user experience.

- Issues specific to real conditions emerge. RUM surfaces problems that scripted tests would miss — a particular browser/device combination experiencing slow renders, a CDN edge mapping certain users to a degraded PoP, or an A/B test variant tanking conversion rates.

The key advantage is fidelity. RUM doesn’t approximate user experience; it measures it directly. If a slow third-party script is hurting page load for users on slow mobile networks in a specific country, RUM will surface that pattern even if no synthetic test was scripted to look for it.
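The segmentation step is where such patterns become visible. As a rough sketch, assume each RUM beacon has already been collected as a dictionary with `country`, `browser`, and `load_ms` fields (hypothetical field names, not any vendor's schema). Grouping beacons and computing a 75th-percentile load time per segment might look like this:

```python
from collections import defaultdict
from math import ceil
from typing import Dict, Iterable, List, Sequence, Tuple


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty sequence."""
    ordered = sorted(values)
    rank = max(1, ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def p75_by_segment(beacons: Iterable[dict],
                   keys: Tuple[str, ...] = ("country", "browser")
                   ) -> Dict[tuple, float]:
    """Group beacons by the given dimensions and return p75 load time per group."""
    groups: Dict[tuple, List[float]] = defaultdict(list)
    for beacon in beacons:
        segment = tuple(beacon[k] for k in keys)
        groups[segment].append(beacon["load_ms"])
    return {segment: percentile(times, 75) for segment, times in groups.items()}
```

A percentile is used rather than an average because a few very slow sessions can hide inside a healthy-looking mean.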

Benefits of RUM

RUM offers a different and complementary set of advantages:

- Unfiltered ground truth. Captures what real users actually experience, not what tests predict they’ll experience.

- Coverage of unanticipated scenarios. Surfaces issues you didn’t think to script for — unusual browser/device combinations, problematic third-party content, regional ISP quirks.

- Business-relevant metrics. Connects performance data to outcomes like conversion rate, bounce rate, and session duration.

- Continuous, organic coverage. Every real user session contributes telemetry, so coverage scales with traffic.

- A/B test and feature impact. Performance impact of code changes, new features, or experiments can be measured directly against real user behavior.

Synthetic Monitoring vs. RUM: Key Differences

The two approaches differ across several dimensions. The table below summarizes the tradeoffs:

| Dimension | Synthetic Monitoring | Real User Monitoring (RUM) |
|---|---|---|
| Approach | Active: initiates scripted tests | Passive: observes real sessions |
| Data source | Controlled agents in known locations | JavaScript-instrumented user browsers |
| Coverage | Predefined scenarios on a schedule | Whatever real users actually do |
| Strength | Proactive detection, baselining, SLAs | Real-world fidelity, business metrics |
| Weakness | Misses what wasn’t scripted | Depends on real traffic; no proactive coverage |
| Off-peak monitoring | Yes: runs continuously regardless of traffic | No: requires active users to generate data |
| Pre-launch / staging | Yes: runs before public launch | No: requires real users to generate data |
| User segmentation | Limited (agent location only) | Rich (device, browser, geo, demographics) |
| Implementation | Script tests, deploy agents | Embed JavaScript in application |
| Best at answering | “Is the system working right now?” | “What are real users actually experiencing?” |

The tradeoffs aren’t symmetrical. Synthetic monitoring excels where control and predictability matter. RUM excels where fidelity to real-world conditions matters. Most teams need both.

Why teams use both together

Each method covers a blind spot in the other:

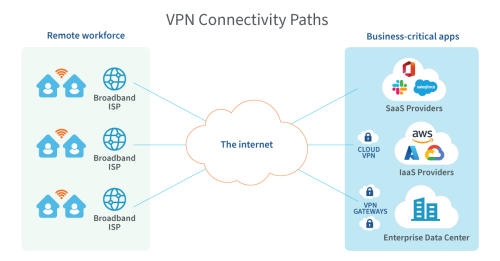

- Synthetic catches what RUM can’t reach. Pre-launch testing, off-peak monitoring, B2B applications with low traffic volume, validation of paths users haven’t taken yet (a new region, a new SaaS provider, a new transit relationship). RUM only sees the sessions that exist, whereas synthetic creates the sessions you need.

- RUM catches what synthetic misses. Issues that only appear under real-world conditions: a Chrome extension that breaks rendering, a Safari version with an unusual TLS bug, a mobile carrier that throttles certain destinations. Synthetic only tests what was scripted, while RUM sees whatever real users actually encounter.

- Together, they triangulate root cause. When users report a slowdown, RUM confirms which segments are affected. Synthetic tests confirm whether the underlying infrastructure is healthy from controlled vantage points. Flow and routing data fill in the why.

How synthetic monitoring and RUM complement network telemetry

RUM and synthetic both measure user experience — but neither, on its own, explains why the experience is what it is. That requires network-layer context: traffic flow data, BGP routing, device telemetry, and path analysis.

Consider a real scenario. RUM surfaces that users in Frankfurt are seeing 3-second page loads on a SaaS dashboard, while the global average is 800ms. Synthetic confirms the slowdown — Kentik agents in Frankfurt show degraded HTTP response times to the same endpoint. Both methods agree there’s a problem, but neither tells you why. The answer comes from network telemetry: BGP route data shows a transit-path change that moved Frankfurt traffic onto a longer AS path two days ago, and flow data confirms the new path correlates with the slowdown.

This is why network-aware DEM is increasingly the standard. Synthetic monitoring tells you that something is degraded. RUM confirms who’s affected. Network telemetry — flow, BGP, device data — explains why and where. Without all three layers, troubleshooting takes far longer because each layer raises questions the others can’t answer.
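The way the three layers narrow down a cause can be written as a small decision function. The sketch below is a deliberately simplified heuristic: the function name, the returned labels, and the 2x ratio are assumptions for illustration, not a description of any product's logic. The AS numbers in the usage note come from the range reserved for documentation.

```python
from typing import Sequence


def triage(region_load_ms: float,
           global_load_ms: float,
           synthetic_degraded: bool,
           as_path_before: Sequence[int],
           as_path_after: Sequence[int],
           ratio: float = 2.0) -> str:
    """Combine RUM, synthetic, and routing signals into a first hypothesis."""
    # RUM layer: is this region clearly slower than the global figure?
    users_affected = region_load_ms > ratio * global_load_ms
    # Network layer: did the AS path to the destination change?
    path_changed = list(as_path_before) != list(as_path_after)

    if users_affected and synthetic_degraded:
        return "suspect-routing" if path_changed else "suspect-infrastructure"
    if users_affected:
        # Scripted tests look fine, so the cause is likely something
        # they do not cover (browser, device, third-party content).
        return "suspect-client-side"
    if synthetic_degraded:
        return "degraded-no-user-impact-seen"
    return "healthy"
```

With the Frankfurt numbers from the scenario, `triage(3000, 800, True, [64500, 64501], [64500, 64502, 64503, 64501])` returns `"suspect-routing"`, which points the investigation at BGP and flow data first.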

This is the layer Kentik provides. Kentik isn’t a RUM platform — it doesn’t instrument application code or capture browser-side session telemetry. But Kentik unifies synthetic monitoring with network flow analytics, BGP routing intelligence, and device metrics in a single platform — which makes it the natural network-intelligence complement to RUM tools like Datadog RUM, New Relic Browser, or Dynatrace Real User Monitoring.

Synthetic Monitoring vs. RUM for Complex Web Applications

Modern web applications often span multiple services, third-party dependencies, CDNs, and cloud regions. Synthetic monitoring and RUM each play distinct roles in keeping them performant.

What synthetic monitoring contributes

Synthetic monitoring provides automated, proactive testing that catches issues before they affect real users. For complex web applications, that typically means:

- Testing critical user journeys. Multi-step transactions like login, search, add-to-cart, and checkout can be scripted as Synthetic Transaction Monitoring tests and run continuously, alerting when any step regresses.

- Measuring performance across geographies. Distributed agents reveal region-specific bottlenecks that local testing would miss.

- Monitoring API endpoints. Backend APIs and third-party service integrations can be tested independently of the user-facing application, isolating where failures originate.

- Establishing baselines and SLA validation. Continuous tests provide the historical record needed to detect regression and demonstrate compliance.

What RUM contributes

RUM provides organic, real-world insight that no scripted test can replicate:

- Real user experience data. Actual load times, transaction times, and resource usage as real users encounter them.

- User-segment-specific issues. Problems specific to certain devices, browsers, regions, or demographics — patterns that emerge only at the scale of real traffic.

- Content delivery insight. Performance of images, JavaScript, CSS, and third-party content as actually delivered to users — useful for CDN optimization and front-end performance work.

- Feature and change impact. Direct measurement of how a code release, new feature, or experiment affects real user behavior.

Used together, synthetic monitoring catches issues proactively while RUM confirms which issues are actually affecting users and quantifies the impact.

Synthetic Monitoring vs. RUM for Network Monitoring

Network monitoring is where synthetic tools and network intelligence platforms — like Kentik — do most of their work. RUM has limited but real applications here too.

What synthetic monitoring contributes to network monitoring

Synthetic is the dominant approach for network performance validation:

- Proactive performance checks. Continuous tests measure latency, packet loss, and jitter across critical paths — between data centers, to cloud regions, across SD-WAN underlays, and to internet destinations.

- Latency and packet loss detection. Hop-by-hop traceroute data identifies where degradation occurs along a path, including AS-path-level visibility for internet routing issues.

- Network-device and service availability. Periodic tests against routers, switches, firewalls, DNS servers, and SaaS endpoints validate that infrastructure remains reachable and responsive.

- Baseline establishment and threshold alerting. Continuous synthetic data builds a historical baseline that makes regression detection precise.
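To make the metrics in this list concrete, the sketch below reduces one round of ping-style probes to latency, jitter, and packet loss, then compares them with thresholds. It assumes each probe is recorded as a round-trip time in milliseconds, or `None` when the probe was lost. Jitter is computed here as the mean absolute difference between consecutive round-trip times, which is one common definition among several. All names are hypothetical.

```python
from typing import Dict, List, Optional, Sequence


def summarize_probes(rtts_ms: Sequence[Optional[float]]) -> Dict[str, Optional[float]]:
    """Summarize one test round: average latency, jitter, and loss percentage."""
    if not rtts_ms:
        raise ValueError("at least one probe is required")
    received = [r for r in rtts_ms if r is not None]
    loss_pct = 100.0 * (len(rtts_ms) - len(received)) / len(rtts_ms)
    latency = sum(received) / len(received) if received else None
    # Differences between consecutive replies that actually arrived.
    deltas = [abs(b - a) for a, b in zip(received, received[1:])]
    jitter = sum(deltas) / len(deltas) if deltas else 0.0
    return {"latency_ms": latency, "jitter_ms": jitter, "loss_pct": loss_pct}


def breaches(summary: Dict[str, Optional[float]],
             thresholds: Dict[str, float]) -> List[str]:
    """Return the sorted names of metrics that exceed their thresholds."""
    return sorted(
        name for name, limit in thresholds.items()
        if summary.get(name) is not None and summary[name] > limit
    )
```

For example, `summarize_probes([20.0, 22.0, None, 21.0, 25.0])` reports 20% loss and an average latency of 22 ms. Checked against the example limits `{"latency_ms": 50, "jitter_ms": 2, "loss_pct": 10}`, the jitter and loss thresholds are breached while latency is not.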

What RUM contributes to network monitoring

RUM’s role in network monitoring is narrower but real:

- User-segment network performance. RUM data reveals network-related issues experienced by specific user groups, geographies, or ISPs that synthetic tests might not have been scripted to cover.

- Connecting network performance to user impact. When network telemetry shows a problem, RUM quantifies the user-facing consequence — slower page loads, higher bounce rates, lost conversions.

- CDN performance validation. Real-user data shows how CDN-delivered content actually performs at the edge, which is hard to fully replicate with synthetic tests from a fixed set of agents.

For network teams, synthetic is the primary tool. RUM provides supporting evidence about user-facing impact when working with web-application teams.

When to Use Synthetic Monitoring vs. RUM

The decision isn’t usually “either/or” — it’s “when does each approach earn its place in the stack?” Here’s a practical comparison:

Use synthetic monitoring when you need:

- 24/7 coverage including off-peak hours

- Pre-launch testing before real users exist

- SLA validation and compliance reporting

- Proactive detection of internet-path or routing issues

- Coverage of B2B applications with low traffic volume

- Testing of API and DNS endpoints independently of user-facing code

- Network-layer performance data with hop-by-hop visibility

Use RUM when you need:

- Real user experience data segmented by device, browser, geography, and demographics

- Insight into front-end and rendering performance

- Visibility into third-party content and CDN delivery as users actually experience it

- A/B test and feature-release impact measurement

- Conversion-rate and business-metric correlation with performance

- Coverage of unanticipated user behaviors and edge-case scenarios

Use both when you have:

- Customer-facing applications where digital experience drives revenue

- Hybrid or multi-cloud architectures where issues can originate anywhere

- SaaS products where SLA commitments to customers matter

- Engineering organizations large enough to need both proactive alerting (synthetic) and real-world validation (RUM)

For most production applications of meaningful scale, the answer is “use both.”

Kentik’s role in a synthetic + RUM strategy

Kentik provides the synthetic monitoring side of digital experience monitoring, plus the network telemetry that explains performance issues regardless of how they were detected. Specifically:

- Synthetic monitoring via Kentik Synthetics, with ping, traceroute, HTTP/API, DNS, page load, and Puppeteer-based transaction tests — running from a global network of agents plus customer-deployed private agents.

- Network flow analytics that show real traffic patterns across data centers, cloud, and the internet — the context for why a synthetic test or RUM session degraded.

- BGP routing intelligence that reveals when internet path changes are affecting performance, regardless of whether RUM or synthetic detected the symptom first.

- Device metrics and AI-driven investigation through Kentik AI Advisor, which can reason across synthetic, flow, BGP, and device data to surface root cause.

For RUM, Kentik complements the major RUM platforms by adding network-layer context to their browser-side data. When your RUM tool surfaces that users in a particular region are seeing slow page loads, Kentik shows you whether the issue is in the network path, the BGP routing, the cloud provider’s backbone, or the destination service.

Related Kentipedia articles and Kentik solutions

- What is Digital Experience Monitoring (DEM)?

- What is Synthetic Monitoring?

- What is Synthetic Transaction Monitoring (STM)?

- What is End User Experience Monitoring?

- Network Performance Monitoring

- The Role of Network Observability in Modern APM

- Kentik Synthetics

- Digital Experience Monitoring Solutions

FAQs about Synthetic Monitoring vs. Real User Monitoring

What is the difference between synthetic monitoring and real user monitoring?

Synthetic monitoring is active — scripted tests run on schedules from controlled agents to simulate user behavior and validate performance. Real User Monitoring (RUM) is passive — JavaScript instrumentation captures telemetry from actual user sessions as they happen. Synthetic gives you proactive, controlled coverage while RUM gives you unfiltered real-world fidelity. Most production applications benefit from using both.

Should I use synthetic monitoring or RUM?

In most cases, the answer is both. Synthetic monitoring provides 24/7 baseline coverage and proactive detection — including during off-peak hours when no real users are active, and on paths and endpoints that real users may not yet be exercising. RUM provides ground-truth data about what real users are actually experiencing, including issues you didn’t think to script for. Each catches what the other misses, so a complete digital experience monitoring strategy uses both.

Does Kentik provide RUM (Real User Monitoring)?

Kentik is not a RUM platform. RUM platforms instrument client-side application code with JavaScript to capture browser-side telemetry from real user sessions — examples include Datadog RUM, New Relic Browser, and Dynatrace Real User Monitoring. Kentik provides synthetic monitoring (active testing) and network intelligence (flow, BGP, device telemetry), and complements RUM tools by providing the network-layer context they don’t see.

What tools can continuously test network performance with synthetic probes?

Continuous network performance testing requires synthetic probes that run on short intervals (typically sub-minute to a few minutes) from multiple geographically distributed locations, generating standardized metrics like latency, jitter, and packet loss. Look for platforms that combine ping, traceroute, HTTP, and DNS test types from both public global agents and customer-deployed private agents, with results integrated into the same platform as flow and routing data so failures can be investigated end-to-end. Kentik supports this with continuous probe-based tests from public global agents and private ksynth-agents, with results correlated against real flow data and BGP routing context for fast root-cause investigation.

How do I correlate synthetic monitoring results with real user traffic?

Correlation requires both data sources to live in the same analytics platform with a shared time axis and consistent identifiers (IPs, ASNs, applications). When a synthetic test degrades, real flow data should immediately answer whether real users are also affected — and how many. Kentik supports this by ingesting flow telemetry and synthetic test results into a unified platform, displaying both on the same time-series views, and providing AI-driven investigation that can reason across both data sources.
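A minimal sketch of that join, assuming both data sets have already been bucketed by minute and tagged with a destination ASN. The record shapes and field names (`minute`, `dst_asn`, `healthy`, `src_ip`, `bytes`) are invented for this example and are not a Kentik schema.

```python
from typing import Dict, Iterable, Tuple

Key = Tuple[int, int]  # (minute bucket, destination ASN)


def affected_traffic(synthetic_results: Iterable[dict],
                     flow_records: Iterable[dict]) -> Dict[Key, dict]:
    """For each degraded (minute, ASN), count real sources and bytes seen."""
    # Start every degraded bucket at zero so "no real traffic affected"
    # is an explicit answer rather than a missing key.
    impact: Dict[Key, dict] = {
        (r["minute"], r["dst_asn"]): {"sources": set(), "bytes": 0}
        for r in synthetic_results if not r["healthy"]
    }
    for flow in flow_records:
        key = (flow["minute"], flow["dst_asn"])
        if key in impact:
            impact[key]["sources"].add(flow["src_ip"])
            impact[key]["bytes"] += flow["bytes"]
    return {
        key: {"sources": len(entry["sources"]), "bytes": entry["bytes"]}
        for key, entry in impact.items()
    }
```

The shared key is what makes the correlation possible: without a common time axis and a common identifier, the two data sets cannot be lined up.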

What’s the best way to measure synthetic uptime versus real traffic availability?

Synthetic uptime measures whether scripted tests succeed while real-traffic availability measures whether actual user requests are completing. Both matter, and the most useful platforms surface them side by side so engineers can quickly see whether a synthetic-test failure is also showing up in real traffic. Kentik supports this by displaying synthetic test results alongside real flow data, and through Autonomous (flow-based) tests that automatically generate synthetic coverage for the destinations real traffic is actually reaching — so synthetic monitoring stays aligned with real user behavior as patterns shift.
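The two measures reduce to two simple ratios, and the gap between them is often the interesting number. The sketch below uses hypothetical function names and assumes that counts of completed and failed real requests are available from some traffic source.

```python
from typing import Sequence


def synthetic_uptime(test_passed: Sequence[bool]) -> float:
    """Percentage of scripted test runs that succeeded."""
    return 100.0 * sum(test_passed) / len(test_passed)


def traffic_availability(requests_total: int, requests_failed: int) -> float:
    """Percentage of real requests that completed."""
    return 100.0 * (requests_total - requests_failed) / requests_total


def availability_gap(test_passed: Sequence[bool],
                     requests_total: int, requests_failed: int) -> float:
    """Real-traffic availability minus synthetic uptime, in percentage points."""
    return (traffic_availability(requests_total, requests_failed)
            - synthetic_uptime(test_passed))
```

A large positive gap suggests that the failing tests cover something real users rarely hit. A negative gap suggests that real users are failing on paths the tests do not cover.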

How can I test DNS, TLS, and HTTP performance from global agents?

Each layer of the web stack can fail independently, so production-grade synthetic monitoring tests them independently. DNS tests validate resolution time across multiple authoritative and recursive servers. TLS metrics are captured as part of HTTPS test setup. HTTP and API tests measure status codes, time to last byte, and response size. Kentik supports this with dedicated DNS Server Monitor and DNS Server Grid tests, plus HTTP/API tests that surface DNS resolution time, TLS handshake time, and HTTP response timing as separate metrics.
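To show what measuring each layer separately means in practice, here is a rough standard-library sketch that timestamps each phase of a single HTTPS request. It is a simplified illustration with hypothetical function names, not how any monitoring agent is built: it takes the first address returned by the resolver, sends one plain `GET`, and ignores redirects and HTTP/2.

```python
import socket
import ssl
import time
from typing import Dict

PHASES = ["start", "dns", "connect", "tls", "first_byte", "last_byte"]
NAMES = ["dns_ms", "connect_ms", "tls_ms", "ttfb_ms", "download_ms"]


def phase_durations(marks: Dict[str, float]) -> Dict[str, float]:
    """Convert cumulative timestamps (seconds) into per-phase durations (ms)."""
    return {name: (marks[end] - marks[begin]) * 1000.0
            for name, begin, end in zip(NAMES, PHASES, PHASES[1:])}


def measure_https(host: str, path: str = "/", port: int = 443,
                  timeout: float = 5.0) -> Dict[str, float]:
    """Time DNS, TCP connect, TLS handshake, first byte, and last byte."""
    marks = {"start": time.perf_counter()}
    sockaddr = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)[0][4]
    marks["dns"] = time.perf_counter()
    raw = socket.create_connection(sockaddr[:2], timeout=timeout)
    marks["connect"] = time.perf_counter()
    context = ssl.create_default_context()
    # wrap_socket performs the TLS handshake on an already connected socket.
    with context.wrap_socket(raw, server_hostname=host) as tls:
        marks["tls"] = time.perf_counter()
        request = f"GET {path} HTTP/1.1\r\nHost: {host}\r\nConnection: close\r\n\r\n"
        tls.sendall(request.encode("ascii"))
        tls.recv(1)
        marks["first_byte"] = time.perf_counter()
        try:
            while tls.recv(65536):
                pass  # drain the response until the server closes
        except ssl.SSLError:
            pass  # some servers close without a clean TLS shutdown
        marks["last_byte"] = time.perf_counter()
    return phase_durations(marks)
```

Calling `measure_https("example.com")` from several locations and comparing `dns_ms`, `tls_ms`, and `ttfb_ms` separately shows which layer differs between them.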

What methods can verify SaaS availability from multiple geographies?

The most reliable approach is to run continuous synthetic tests against SaaS endpoints from a globally distributed network of agents, measuring DNS resolution, TLS handshake time, HTTP status codes, and end-to-end response time from each location separately. Comparing results across geographies makes it possible to distinguish a provider-wide outage from a regional ISP issue or a single-geography routing problem. Kentik supports this with global agents in major internet hubs and public cloud regions, plus optional private agents for testing from inside corporate networks.
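The comparison across geographies can be expressed as a simple classification over per-region results. In this sketch the function name, the labels, and the 80% cutoff for calling an outage provider-wide are all assumptions chosen for illustration.

```python
from typing import Dict, List, Tuple


def classify_scope(ok_by_region: Dict[str, bool],
                   provider_wide_ratio: float = 0.8) -> Tuple[str, List[str]]:
    """Label a failure pattern as healthy, regional, or provider-wide."""
    failing = sorted(region for region, ok in ok_by_region.items() if not ok)
    if not failing:
        return "healthy", []
    if len(failing) / len(ok_by_region) >= provider_wide_ratio:
        return "provider-wide", failing
    return "regional", failing
```

For example, `classify_scope({"fra": False, "lon": True, "nyc": True, "sin": True})` returns `("regional", ["fra"])`, which points toward a regional ISP or routing issue rather than the SaaS provider itself.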

How do I alert on degradation of critical user journeys from key sites?

User-journey alerting requires scripted multi-step tests (login → action → confirmation, for example) running from agents located in the geographies and networks where critical users live, with health thresholds tuned per step rather than only on overall test outcome. Kentik supports this with Puppeteer-based Transaction tests that record and replay user journeys from any agent location, plus configurable per-metric health thresholds that integrate with notification channels like PagerDuty, Slack, and webhooks.
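Per-step thresholds can be sketched as follows. The example assumes the journey has already run and produced an ordered list of step timings in milliseconds, with `None` marking a step that failed. The step names and limits are hypothetical, and the scripting of the journey itself is out of scope here.

```python
from typing import Dict, List, Optional, Sequence, Tuple


def evaluate_journey(step_timings_ms: Sequence[Tuple[str, Optional[float]]],
                     step_limits_ms: Dict[str, float]) -> List[Tuple[str, str]]:
    """Return (step, problem) pairs for steps that failed or ran too slowly."""
    findings: List[Tuple[str, str]] = []
    for name, elapsed in step_timings_ms:
        if elapsed is None:
            findings.append((name, "failed"))
            break  # later steps depend on this one, so stop here
        if elapsed > step_limits_ms.get(name, float("inf")):
            findings.append((name, "slow"))
    return findings
```

Evaluating each step separately means an alert can name the step that regressed, such as a slow search, even when the journey as a whole still completes.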

How can I simulate user journeys over different network paths?

Simulating user journeys across different network paths means running the same scripted journey from agents in different geographies, on different ISPs, or behind different cloud regions — then comparing the results. Differences in completion time, error rates, or step-level performance reveal which paths or providers are degrading the user experience. Kentik supports this by letting Transaction tests run from any number of public or private agents simultaneously, with results displayed side by side so path-related performance differences are immediately visible.

How can I test application reachability from private and public agents?

Reachability testing typically combines public agents for outside-in validation with private agents for inside-out and hybrid coverage. Public agents confirm whether your application is reachable from the internet at large. Private agents confirm whether internal users, branch offices, or cloud workloads can reach the same application from their actual network position. Kentik supports both, with the Kentik Global Synthetic Network providing public agents in major internet hubs and customer-deployed ksynth-agents for private agent coverage in data centers, branches, and cloud VPCs.

What’s the best method to measure how much the network contributes to page load time?

Network-attributable page load performance shows up most clearly in two metrics: DNS resolution time and TLS handshake time, both of which depend on network and provider behavior rather than application code. Combine those with traceroute-based path analysis to identify which hops contribute to slowdowns. Kentik supports this with Page Load tests that capture DNS, TLS, navigation, connect, and response timings as separate metrics, plus path views that show the AS-level routing path each test took.

How can I model the impact of a provider outage on user experience?

Provider-outage modeling combines synthetic tests from multiple ISPs and regions (to detect provider-specific degradation) with flow data (to see how much real traffic depends on the affected provider) and BGP data (to see whether routing has shifted). When a provider degrades, this combination tells you which users are affected, which paths can absorb the traffic, and how performance compares across alternatives. Kentik supports this through unified synthetic, flow, and BGP analytics in a single platform.
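The flow-data part of that model comes down to asking how much real traffic crosses the affected provider. A minimal sketch, assuming each flow record carries a byte count and the AS path it traversed (hypothetical field names `bytes` and `as_path`, with AS numbers from the documentation range):

```python
from typing import Iterable, List


def provider_exposure(flow_records: Iterable[dict], provider_asn: int) -> float:
    """Percentage of total bytes whose AS path includes the given provider."""
    records: List[dict] = list(flow_records)
    total = sum(f["bytes"] for f in records)
    via_provider = sum(f["bytes"] for f in records
                       if provider_asn in f["as_path"])
    return 100.0 * via_provider / total if total else 0.0
```

If 70% of bytes traverse the degraded provider, the outage is a priority. If the share is 2%, rerouting may not be worth the risk.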

What features are critical in synthetic transaction monitoring for networks?

For network teams, the most useful Synthetic Transaction Monitoring features go beyond browser-level scripting to include path visibility (so transaction degradation can be traced to specific hops or AS paths), correlation with flow data (so traffic patterns can confirm whether real users are affected), and integration with BGP routing context (so internet-path changes can be correlated with transaction regressions). Kentik supports this with Puppeteer-based Transaction tests integrated into the same platform as flow analytics, BGP monitoring, and path-aware traceroute data.

How can I measure user experience for gaming and real-time apps?

Gaming and real-time apps are highly sensitive to jitter and packet loss, and benefit from continuous network-layer testing along the paths users actually traverse. Deploy agents in the regions and networks where players live, run sub-minute ping and traceroute tests to game servers and matchmaking endpoints, and correlate any degradation with BGP routing changes or transit issues upstream. Kentik supports this with global synthetic agents, customer-deployed private agents, and integrated BGP monitoring for upstream routing context.

Monitor digital experience with Kentik

Kentik is the network intelligence platform that unifies synthetic monitoring with real traffic analytics, BGP routing intelligence, and AI-driven investigation — so you can detect performance issues proactively, understand what’s driving them, and complement your RUM tools with the network-layer context they don’t see.

- Get a demo — See how Kentik combines synthetic monitoring with network intelligence

- Kentik Synthetics — Continuous testing with ping, traceroute, HTTP, DNS, page load, and transaction tests

- Kentik Global Synthetic Network — Hundreds of public agents plus customer-deployed private agents

- Digital Experience Monitoring Solutions — DEM powered by unified synthetic and real traffic data

- Kentik AI Advisor — Investigate performance issues across synthetic, flow, and BGP data with natural language