Why and How to Track Connectivity Costs

Summary

As today’s economy goes online, network costs can be a determining factor in business success. Failure to strategize and optimize connectivity expenses will naturally result in a loss of competitiveness. To address customer needs, Kentik launched a new automated workflow to manage connectivity costs, inform negotiations in time for contract renewal, and stay on top of optimization opportunities, all in Kentik’s user-friendly style.

Network Interconnection: Part 2 of our new “Building and Operating Networks” series

“Previously on Network Interconnection…”

This article is the second in a series around building and operating networks. The previous article in the series (Building and Operating Networks: Assuring What Matters) sets the stage and defines the specifics of the most common networks out there, and it lays out a framework that helps benchmark, track and plan for building and operating them.

One of the foundational ambitions of network observability is to provide actionable answers to any question related to your network and cloud infrastructure. These answers are also required to be consumable by a broader audience than the network expert, breaking the boundaries of usual Net/Dev/Ops teams to support modern software engineering, finance and executive teams, to name a few.

As explained previously, all network and cloud infrastructure requires cohesive instrumentation across these three dimensions:

- Performance

- Cost

- Reliability

As a follow-up, today we’re going to focus on the cost aspect of interconnection.

Why Connectivity Costs Are Important

As today’s economy continues to move online, network and cloud infrastructure become an increasing part of any enterprise’s cost of goods and services (COGS). With this in mind, failure to track, plan and optimize these costs naturally results in loss of competitiveness.

An eyeball network is an ISP with residential subscribers at the content consuming edge of the internet. This type of ISP offers internet access plans to residential subscribers in markets that can be so competitive that the list price for a plan is often dangerously close to the cost of the bandwidth to serve content to the subscribers, and the margins are paper thin.

A content provider network’s profitability almost exclusively relies on their ability to serve subscribers at a cost offset by the monetization of their content. Managing cost versus performance tradeoffs across a potentially global footprint of end users is a key challenge: any investment in local performance must be carefully weighed against the direct ability to monetize it.

For the purpose of this article, we’re only interested in edge connectivity costs. We’ll save backbone infrastructure costs for a later day.

The Hard Thing(s) About Connectivity Costs

Before we dive into how network observability solves for connectivity costs, let’s take a moment to list the roadblocks standing in the way of an efficient cost strategy in today’s networks.

Silos and Tools

One of the fundamental issues with connectivity costs is that they don’t strictly belong anywhere in the company. The network engineering team plans for capacity and provisions interconnections, but the finance team holds the keys to the chest, and the executives demand clear visibility on COGS.

This chasm is aggravated by the lack of a unified tool that can handle all that is involved in managing interconnection costs. In most cases, repositories for the necessary information are separate and rarely interconnected, and any useful computed information requires heavy spreadsheet wrangling to bridge these gaps.

So what do we need to track towards managing connectivity costs? Technically not much. Connectivity is metered by providers based on the maximum of in- or out-traffic on a set of interfaces. With the price per Mbps defined in a contract, just multiply the max traffic value by the unit cost at the end of the month and you’re done.
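As a minimal sketch of that "theoretically simple" calculation, the snippet below bills on the larger of the inbound and outbound metered values. The function name and the numbers are hypothetical and only illustrate the arithmetic.

```python
def monthly_cost(in_mbps: float, out_mbps: float, price_per_mbps: float) -> float:
    """Bill on the larger of the inbound and outbound metered values."""
    billable_mbps = max(in_mbps, out_mbps)
    return billable_mbps * price_per_mbps


# Hypothetical month: 4,200 Mbps inbound, 1,100 Mbps outbound, 0.25 per Mbps.
example_cost = monthly_cost(4200.0, 1100.0, 0.25)
print(example_cost)  # 1050.0
```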

While theoretically simple, in practice, calculating connectivity cost can become quite the epic quest. The roadblocks for extracting and munging the required data points across different silos can be tedious to overcome due to the following:

1. Documentation of all external interfaces’ traffic counters and providers

SNMP traffic counters from edge devices are used by providers to bill for connectivity. (Note that flow-based billing is usually not used due to imprecisions caused by sampling.)

The usual place for this is either:

- Router configuration repositories

- Any SNMP monitoring system

2. Documentation of all types of interconnections to be able to slice and dice on coherent traffic datasets

Costs need to be evaluated within categories. While it is useful to track an overall price per Mbps, optimizations are done differently based on the type of interconnectivity (e.g., private or public peering, transit, etc.). This implies the need for additional metadata to qualify interconnection interfaces.

3. Telemetry for all of the edge devices’ external interfaces

Since connectivity costs are mainly measured by traffic volume in or out of the network edge devices, we need traffic information for every port involved in external connectivity. As mentioned, SNMP counter data is the preferred method to meter traffic volume, with 5-minute samples being the accepted practice for invoicing.

4. Cost model documentation for every provider contract

Connectivity contracts rely on different rates and models. Some are flat rates for a certain port, some are 9Xth percentile-based, and some are tiered with a wide range of subtle variations. Each of these models usually sits in a contract stored somewhere in the finance silo, most likely inaccessible in any programmatic way.

5. Calendar information such as monthly invoice date or contract term

Lastly, as invoices come in every month, the potential for incorrect provider invoices increases with the number of providers and contracts, aggravated by the difficulty of computing the expected amounts easily.

As a byproduct, connectivity contract renewals rarely get the timely attention and traffic history analysis they deserve, resulting in new rates being negotiated without proper instrumentation or target price goals.
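To make roadblocks 3 and 4 above more concrete, here is a minimal sketch of turning 5-minute SNMP octet counter readings into Mbps samples and then into a percentile value. It assumes 64-bit counters (such as `ifHCInOctets`) and a nearest-rank percentile; a given provider may use a different percentile convention, and the function names are hypothetical.

```python
import math

COUNTER_MAX_64 = 2**64  # assumes 64-bit counters such as ifHCInOctets


def counters_to_mbps(octet_counters, interval_s=300):
    """Convert successive octet counter readings into per-interval Mbps."""
    rates = []
    for prev, curr in zip(octet_counters, octet_counters[1:]):
        # The modulo tolerates a single counter wrap between two readings.
        # A counter reset (e.g. a device reboot) is not handled here.
        delta = (curr - prev) % COUNTER_MAX_64
        rates.append(delta * 8 / interval_s / 1_000_000)
    return rates


def percentile_mbps(samples, pct=95):
    """Nearest-rank percentile: sort the samples and drop the top (100 - pct)%."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Three readings taken 5 minutes apart yield two rate samples.
rates = counters_to_mbps([0, 37_500_000_000, 75_000_000_000])
print(rates)  # [1000.0, 1000.0]
```

With 5-minute samples over a 30-day month there are 8,640 samples, so a 95th percentile computed this way ignores the 432 highest ones, which is 36 hours of peak traffic.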

Cost Models Galore

As explained previously, connectivity provider costs are usually documented in the order form sitting in the legal or finance department. Extracting this metadata to overlay on top of interface traffic data usually goes through the magic of spreadsheets. While formulas will help those with high spreadsheet-fu, turning each contract into a formula is a chore, and soon becomes a burden to maintain and use. Moreover, each model in place in the industry can come with significant complexity that just cannot be documented in a formula. Here are some examples:

- Type of percentile: The percentile (95th, 98th…) used on the interface to meter the traffic. The most commonly used percentile is the 95th, but certain exceptions exist with more or less tolerant percentiles.

- Bundled interfaces computation method: The percentile computation model gets more complex when multiple interfaces are under the same contract. Using the “Peak of Sums” method vs. the “Sum of Peaks” method to compute it can lead to very different results, and both are in use in the industry.

- Tier computation method: This is when connectivity is contracted using traffic tiers with a different price per Mbps for each. Two distinct methods are available to compute monthly costs: adding the costs for each tier completed (blending mode), or only taking into account which tier the interfaces land in at the end of the month (parallel tiering).

- Additional charges: Often left out of connectivity cost modelling are individual charges that can apply on a given contract or interfaces within the contract. While the price of cross-connects to a peered network may not outweigh the cost of traffic, these are still OPEX entries that add up over the entire edge and as such need to be documented, computed, and tracked accordingly.
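The bundling and tiering variations listed above can be sketched as follows. This is an illustration under stated assumptions, not any provider's actual billing logic: "Sum of Peaks" is taken to mean summing each interface's percentile, "Peak of Sums" to mean the percentile of the summed series, and the tier boundaries, prices, and function names are all hypothetical.

```python
import math


def nearest_rank(samples, pct=95):
    """Nearest-rank percentile of a list of Mbps samples."""
    ordered = sorted(samples)
    return ordered[math.ceil(pct * len(ordered) / 100) - 1]


def sum_of_peaks(series_by_interface, pct=95):
    """Compute the percentile per interface, then add the results."""
    return sum(nearest_rank(series, pct) for series in series_by_interface)


def peak_of_sums(series_by_interface, pct=95):
    """Add the interfaces sample by sample, then take one percentile."""
    totals = [sum(values) for values in zip(*series_by_interface)]
    return nearest_rank(totals, pct)


# Hypothetical tiers: (upper bound in Mbps, price per Mbps), lowest tier first.
TIERS = [(1000, 1.00), (5000, 0.80), (math.inf, 0.50)]


def blended_cost(mbps, tiers=TIERS):
    """Charge each slice of traffic at the rate of the tier it falls in."""
    cost, floor = 0.0, 0.0
    for ceiling, price in tiers:
        if mbps <= floor:
            break
        cost += (min(mbps, ceiling) - floor) * price
        floor = ceiling
    return cost


def parallel_cost(mbps, tiers=TIERS):
    """Charge all traffic at the rate of the tier the total lands in."""
    for ceiling, price in tiers:
        if mbps <= ceiling:
            return mbps * price
    raise ValueError("tiers must end with an unbounded tier")


# Two bundled interfaces whose peaks occur at different times.
link_a = [100, 900, 100, 100]
link_b = [900, 100, 100, 100]
print(sum_of_peaks([link_a, link_b]))  # 1800
print(peak_of_sums([link_a, link_b]))  # 1000
```

In this toy bundle the two methods differ by almost a factor of two, and at 3,000 Mbps the hypothetical tiers give 2,600 in blended mode versus 2,400 in parallel mode, which is why the method in use has to be documented alongside the rates.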

All of the above results in the following inefficiencies, ensuring that the cost review exercise will never be performed frequently enough:

- The formula used across each provider is difficult to understand at a glance without reading the contract.

- There is no purpose-built tool to document such cost models and keep them up to date.

- It’s difficult to estimate minimum monthly spend for each provider, and it worsens as their number increases.

- An engineer or finance person always needs to manually merge cost data with traffic data.

Network observability allows you to overlay configurable financial models over traffic accounting data without any additional effort needed beyond configuring these models in a very intuitive interface.

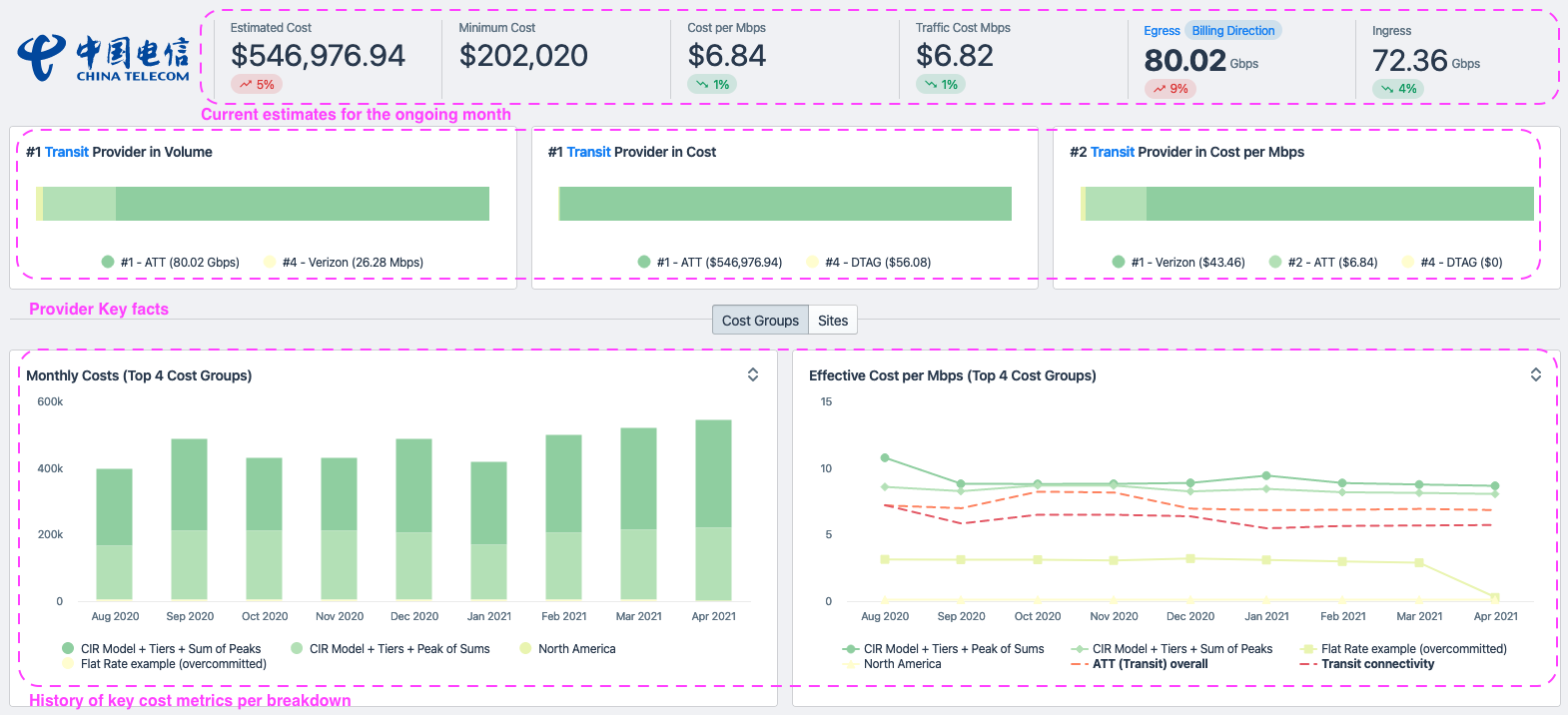

The screenshots below, from the Connectivity Costs workflow update, show models that are easy to understand at a glance.

Lack of a baseline metric to track and tune

A solid interconnection strategy largely relies on:

- Being able to set goals

- Being able to track progress towards these goals

Both of these require taking the “art” out and keeping the science via simple analytics. Analytical interconnection decision-making requires routinely answering questions such as:

- How does our overall transit cost compare to our peering costs?

- Is it worth it for us to pay for this specific local provider vs. our current transit upstream?

- Cost-wise, how do these two transit providers compare? Should I cancel one? Should I add one?

- How have my costs evolved for this provider over the past X months?

The above can only be achieved by being able to formulate cost per Mbps over any two of these three dimensions: connectivity type (transit, IX, paid peering, free peering, direct connect…), provider, and points of presence (sites).

Efficient planning and goal setting not only require being able to ask the system at any moment what the spend and cost per Mbps are for any of these dimensions (connectivity type, provider, and sites), but also to evaluate their evolution against strategic goals.
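As a rough illustration of slicing cost per Mbps across these dimensions, the sketch below groups monthly cost records by any combination of connectivity type, provider, and site. The record layout, provider names, and figures are hypothetical and do not reflect Kentik's data model.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRecord:
    connectivity_type: str
    provider: str
    site: str
    billable_mbps: float
    monthly_cost: float


def cost_per_mbps(records, *dimensions):
    """Return cost per Mbps keyed by the requested dimensions."""
    totals = defaultdict(lambda: [0.0, 0.0])  # key -> [cost, mbps]
    for record in records:
        key = tuple(getattr(record, dim) for dim in dimensions)
        totals[key][0] += record.monthly_cost
        totals[key][1] += record.billable_mbps
    return {key: cost / mbps for key, (cost, mbps) in totals.items() if mbps > 0}


# Hypothetical records for one month.
records = [
    CostRecord("transit", "TransitCo", "NYC", 4000.0, 2000.0),
    CostRecord("transit", "TransitCo", "LON", 2000.0, 1600.0),
    CostRecord("ix", "MetroIX", "NYC", 5000.0, 500.0),
]

by_type = cost_per_mbps(records, "connectivity_type")
by_type_and_site = cost_per_mbps(records, "connectivity_type", "site")
print(by_type)
```

Keeping one record per provider, site, and month makes it straightforward to answer the questions above by changing only the list of dimensions passed in.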

The screengrab below illustrates how Kentik’s Connectivity Cost workflow provides total monthly spend and cost per Mbps benchmark metrics.

It is also worth noting that while the retention of network telemetry (flow) data is defined by a given contractual window, connectivity costs are not bound by this limitation. Connectivity costs are computed every month and are stored pre-calculated virtually forever, making sure that useful cost history is not lost when it falls out of the flow retention window. This allows for planning and goal tracking over much longer periods, as is usually required.

Global connectivity sourcing & currencies

As interconnection grows on the edge of the network and the footprint becomes increasingly global, an additional complexity gets thrown in the mix as a direct byproduct of global connectivity sourcing: currencies and exchange rates.

When the need to source connectivity from local providers arises, procurement may be done in the local currency. Bandwidth procured in bulk then becomes sensitive to variations of exchange rates. Tracking exchange rates requires yet another layer of the software stack needed to track costs together with traffic accounting, cost model documentation, and traffic breakdowns.

Kentik’s newly updated Connectivity Costs workflow relies on an exchange-rate data source covering all available currencies, ensuring that cost estimates for the ongoing month, as well as cost history for the past months, will always:

- Allow financial planning to consolidate all cost metrics in a single currency, and

- Keep both current month estimates, as well as history, truthful to the exchange rates at the time they are computed.
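The two properties above can be pictured with a small sketch: each monthly cost is converted to a single reporting currency using the rate recorded for that same month, so history is not restated when rates move. The rates, amounts, and names below are hypothetical placeholders, not real exchange-rate data or Kentik's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyCost:
    month: str      # "YYYY-MM"
    amount: float   # amount in the contract currency
    currency: str


# Hypothetical rates to USD, keyed by (month, currency).
# Real values would come from an exchange-rate data source.
RATES_TO_USD = {
    ("2024-01", "EUR"): 1.10,
    ("2024-02", "EUR"): 1.05,
}


def to_reporting_currency(cost, rates=RATES_TO_USD, reporting="USD"):
    """Convert one monthly cost using the rate stored for its own month."""
    if cost.currency == reporting:
        return cost.amount
    return cost.amount * rates[(cost.month, cost.currency)]


history = [
    MonthlyCost("2024-01", 1000.0, "EUR"),
    MonthlyCost("2024-02", 1000.0, "EUR"),
    MonthlyCost("2024-02", 500.0, "USD"),
]
converted = [to_reporting_currency(cost) for cost in history]
print(converted)
```

The same 1,000 EUR commitment shows up as a different USD amount in each month, which is the sensitivity to exchange rates described above.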

As shown below, Kentik’s Connectivity Costs workflow now natively handles multiple currencies.

A cross-functional tool

Connectivity costs are metrics consumed by teams other than network operations, yet the resulting data (whether the underlying cost models or the actual metrics) is rarely easy for the non-technical crowd to ingest and parse. More often than not, a network engineer has to insert themselves in the path to executives or finance to massage the data and make it digestible to non-technical audiences, once more being diverted from building and operating the best infrastructure possible to deliver a given service.

<dramatic_backdrop>

Because of this, network engineers have become the unfortunate gatekeepers of an occult art in which only they are versed, giving up their time for endless tedious tasks while at the same time sitting on the critical path of information needed to operate the company efficiently.

</dramatic_backdrop>

The good news is that network observability exists specifically to solve these types of problems, and if you go back to its defined mission from the previous blog post, you’ll read:

Network observability’s mandate is to remove the boundaries between teams, tools and methodologies as it pertains to the network. Its ambition is to make it trivial to answer virtually any question about the network and easily understand the answers. Lastly, network observability streamlines the activity of building and running networks.

The Future of Connectivity Costs is Here

Let’s say you are now reaping the benefits of our new Connectivity Costs workflows. You now review connectivity costs regularly, and have even set goals to track. Congratulations!

Think about this: these interconnection cost models are now available to the rest of the workflows. The future is quite bright. All the necessary ingredients are now in place to compute the cost of any slice of traffic coming in or out of your network: it can be different services that you run or offer; it can be different classes of users or customers that you identify with your own Custom Dimensions logic; or even any CDN or OTT traffic…
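One simple way to picture costing a slice of traffic is proportional allocation, where each slice carries a share of the monthly cost equal to its share of the traffic. This allocation rule is an assumption made for illustration only, since the article does not describe how such costs would actually be computed, and the slice names and numbers are hypothetical.

```python
def allocate_cost(total_cost, traffic_by_slice):
    """Split a monthly cost across slices in proportion to their traffic."""
    total_traffic = sum(traffic_by_slice.values())
    if total_traffic == 0:
        return {name: 0.0 for name in traffic_by_slice}
    return {
        name: total_cost * traffic / total_traffic
        for name, traffic in traffic_by_slice.items()
    }


# Hypothetical slices measured in Mbps, sharing a monthly cost of 1,000.
shares = allocate_cost(1000.0, {"video": 600, "web": 300, "other": 100})
print(shares)
```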

If, like me, you can’t help but daydream about what comes next to support the performance, cost, or reliability of your infrastructure, start a trial and let’s chat!