This Halloween, Don’t Get Spooked by Cloud Visibility Myths

We’ve heard both sides of the story: To some, moving to the cloud is like a trick. But to others, it’s a real treat. So in the spirit of Halloween, we wanted to break down two of the spookiest (or at least the most common) cloud myths we’ve heard of late. From there, we hope to help you remove the mystery of maintaining visibility, performance, and security — no matter where you are in your cloud journey.

Myth 1: Going to the cloud means you no longer need to worry about your applications or networking there.

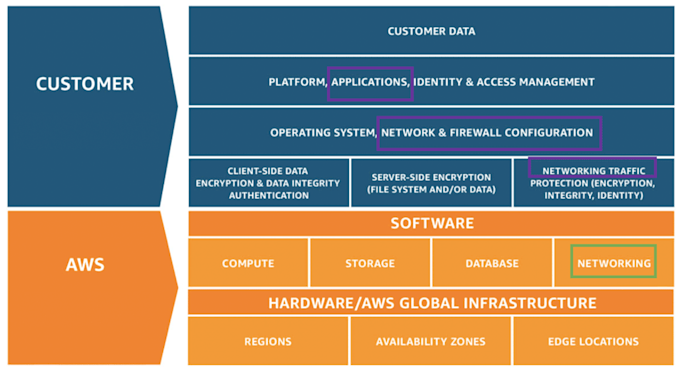

You’ve likely heard of the “Shared Responsibility Model” that every public cloud provider employs today. Take AWS’s model, for example, which we can also apply to other public clouds. In a nutshell, the cloud provider is responsible for security “of the cloud,” while the cloud customer is responsible for security “in the cloud.”

On the one hand, cloud providers like AWS, Google Cloud, and Microsoft Azure are responsible for protecting the infrastructure that runs the services offered in their cloud: the hardware, software, networking, and facilities. On the other hand, these providers require their customers to perform all of the necessary security configuration and management tasks for things such as data, applications, operating systems, and network configuration and protection. And so, as depicted above on the right, networking is not going away just because it wears a “cloud” mask. It will still be there, and you will still need to manage it.

Don’t assume you don’t own your cloud environment. That’s a myth. The “Shared Responsibility Model” should remind every cloud architect and operator that it’s an “all or nothing” game. As the diagram shows, if a cloud customer does a poor job of fulfilling its end of the responsibility, then no matter how well the cloud provider does on its side, the organization and the business are still at huge risk.

Myth 2: You will lose control when you move to cloud.

As a reminder, public cloud promises many great benefits, including deployment agility, operational efficiency, rapid scalability, high performance, and above all else, improved ROI.

Yet, at the same time, it remains true that when you move workloads up to AWS, Google Cloud, Azure, etc., you lose ownership of the hardware, including servers, disks, routers/switches and so on. It is also true that when you operate on your cloud infrastructure, you are actually operating on the “abstracted” layer that the cloud providers make available to you (hey, call it “virtualization”). And finally, it is also true that in your cloud journey, many traditional monitoring / management tools won’t support your modern cloud operations, leaving you clueless when something happens.

Sounds like you’re losing control, right?

Well, losing control at certain levels is not necessarily a bad thing if that serves a business goal (e.g. you don’t want to deal with the overhead of building your own data center). What’s important is keeping a few key questions in mind:

Question 1: What benefit do you want to gain from the cloud (cloud-native architecture)?

Question 2: Are you maximizing those benefits yet? If not, how can you improve this?

That’s where having at least some control is important. That’s also where it becomes critical to know the visibility and control gaps in today’s cloud infrastructure, as that’s typically where organizations lose control. So what are the gaps?

Gap 1: Visibility into application performance dependency mapping

Chances are that each of your applications runs as a highly distributed system. When one component has issues, it’s hard to identify which service is affected and whether other services are experiencing similar issues or changes.

Gap 2: Unified visibility across multiple cloud infrastructures

If you have a hybrid cloud (e.g., a private data center plus public cloud infrastructure) or multi-cloud IaaS (AWS+Azure+GCP+etc.), it makes much more sense from a business perspective to have one operations team manage it all, rather than hiring multiple teams that each handle one environment and end up creating silos. However, with digital infrastructure growing and constantly changing, having just one team do it all can create visibility gaps, particularly from a resources and expertise standpoint.

Gap 3: Visibility into bandwidth and usage cost for cloud bills

One key reason many enterprises are adopting public cloud is to cut IT bills. However, if you don’t architect your cloud infrastructure wisely, you can end up spending even more than before.
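To make Gaps 1 and 3 a little more concrete, here is a minimal Python sketch of how flow data could be rolled up into a rough service-to-service traffic map. Everything in it is an assumption for illustration: the `(src_ip, dst_ip, byte_count)` tuples, the `ip_to_service` lookup, and the function names `traffic_by_service_pair` and `top_talkers` are hypothetical and do not come from any particular product or cloud API.

```python
from collections import defaultdict

def traffic_by_service_pair(flows, ip_to_service):
    """Sum bytes per (source service, destination service) pair.

    flows: iterable of (src_ip, dst_ip, byte_count) tuples (hypothetical input).
    ip_to_service: dict mapping an IP address to a service name (hypothetical).
    IPs missing from the mapping are grouped under "unknown".
    """
    totals = defaultdict(int)
    for src_ip, dst_ip, byte_count in flows:
        src_service = ip_to_service.get(src_ip, "unknown")
        dst_service = ip_to_service.get(dst_ip, "unknown")
        totals[(src_service, dst_service)] += byte_count
    return dict(totals)

def top_talkers(totals, n=3):
    """Return the n service pairs that exchanged the most bytes, largest first."""
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)[:n]
```

The keys of the returned dictionary double as a crude dependency map (which service talks to which), while the byte totals hint at where bandwidth, and therefore data transfer cost, is concentrated.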

Regardless of the gaps, it’s a myth that you have to lose visibility and therefore control entirely when you’re in the cloud. Here are three important points to remember:

Complete visibility across workloads in your cloud environment is a must. Moving up to the cloud doesn’t mean you should trust everyone and assume everything runs safely. We recommend taking the opposite, more cautious approach: make sure you have complete visibility across your entire cloud environment.

Proactively team up with your cloud provider(s) to gain the visibility they own. The fact that Amazon, Microsoft, and Google offer flow logs such as VPC Flow Logs validates this. Many cloud customers craved underlying flow data because it unlocks deeper visibility that matters to the business, and the cloud giants have recently acknowledged its importance.

Find a cutting-edge tool that fits your cloud infrastructure and your business. Avoid the haunted house of traditional metrics from legacy vendors. Real-time, modern cloud visibility from Kentik allows you to see, run, and protect your infrastructure (wherever it may be) and your business.
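For readers who have not looked at raw flow data yet, here is a minimal Python sketch of reading one AWS VPC Flow Logs record. It assumes the default (version 2) space-separated format; custom log formats would need a different field list. The `FlowRecord` class and `parse_flow_line` function are hypothetical names chosen for this example, not part of any AWS SDK.

```python
from dataclasses import dataclass
from typing import Optional

# Field order of the AWS VPC Flow Logs default (version 2) record format.
DEFAULT_FIELDS = [
    "version", "account-id", "interface-id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log-status",
]

@dataclass
class FlowRecord:
    srcaddr: str
    dstaddr: str
    dstport: int
    protocol: int
    byte_count: int
    action: str

def parse_flow_line(line: str) -> Optional[FlowRecord]:
    """Parse one default-format record; return None if it carries no flow data."""
    parts = line.split()
    if len(parts) != len(DEFAULT_FIELDS):
        return None  # not a default-format record
    record = dict(zip(DEFAULT_FIELDS, parts))
    if record["log-status"] != "OK":
        return None  # NODATA / SKIPDATA records use "-" placeholders
    return FlowRecord(
        srcaddr=record["srcaddr"],
        dstaddr=record["dstaddr"],
        dstport=int(record["dstport"]),
        protocol=int(record["protocol"]),
        byte_count=int(record["bytes"]),
        action=record["action"],
    )
```

Skipping records whose status is not `OK` keeps the sketch short; a real pipeline would probably count them instead, since gaps in the data are themselves a visibility signal.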

Don’t be spooked by the cloud. It is possible to maintain visibility into your infrastructure. See for yourself in a new video on how we’re reinventing network analytics or sign up for a demo.

Also, don’t miss the whitepaper “AWS Cloud Adoption, Visibility & Management,” where we look at the survey responses of 310 technical and executive-level peers who attended the 2018 AWS user conference.