Synthetics 101 - Part 1: How to drive better business outcomes

Summary

Synthetic monitoring can help you proactively track the performance and health of your networks, applications and services. In the first post of this multi-part series, Kentik’s Anil Murty will share how synthetic monitoring can also help you drive better business outcomes.

In this multi-part blog series, I’ll show you how to use synthetic monitoring to proactively track the performance and health of your networks, applications and services, with the ultimate goal of helping you drive real business outcomes.

I’ll start with a high-level overview of what synthetic monitoring is and explain how its adoption has grown as a direct consequence of changes in how applications and services are delivered and where they are consumed. I’ll also cover the most common use cases for synthetic monitoring (gleaned from hundreds of customer conversations) and show how the analytics it produces can be tied directly to Key Performance Indicators (KPIs) in each case.

Finally, in the weeks and months ahead, I’ll follow up with individual posts that explore some of the use cases outlined here in detail. Where applicable, I’ll also show you how to use the Kentik platform to meet your objectives in each situation.

What is Synthetic Monitoring?

Merriam-Webster defines the word “synthetic” as something that is “produced artificially” and “devised, arranged or fabricated to imitate or replace usual realities.” That definition captures the high-level idea behind synthetic monitoring pretty well. In the networking context specifically, synthetic monitoring is all about imitating different network conditions and/or simulating different user conditions and behaviors.

Synthetic monitoring imitates:

- Network traffic types
  - Layer-2/3, web, audio, video…
- Network conditions
  - Fully loaded, partially loaded
  - One route vs. another
- User locations
  - Works from San Francisco, but does it work from Auckland, NZ?
- User actions
  - Reading a news site
  - Logging into an application
  - Checking out a shopping cart

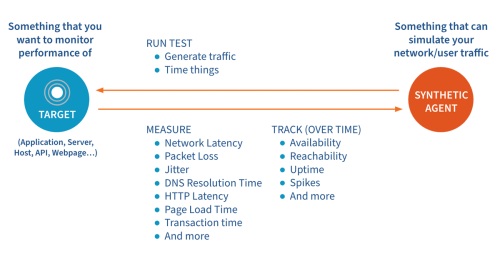

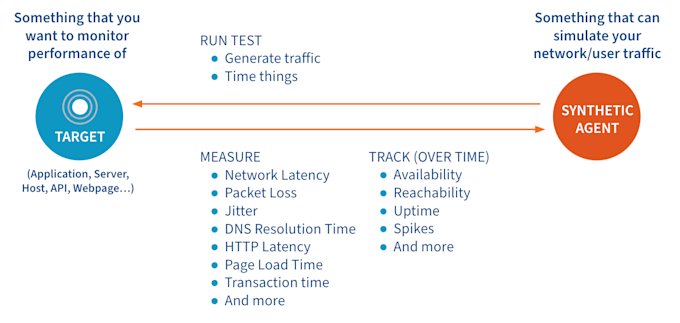

Synthetic monitoring achieves this by generating different types of traffic (e.g., network, DNS, HTTP, web), sending it to a specific target (e.g., an IP address, server, host or web page), measuring the metrics associated with that “test” (such as latency, packet loss or availability) and then building KPIs from those metrics.
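
To make the generate, send, measure and build-KPIs loop concrete, here is a minimal Python sketch of a single HTTP test. It is an illustration under stated assumptions, not how Kentik Synthetics is implemented: the names `ProbeResult`, `run_http_test` and `build_kpis` are hypothetical, the only traffic type shown is an HTTP GET, and the KPIs (availability plus median and 95th-percentile latency) are just examples of what you might track.

```python
import http.client
import math
import statistics
import time
import urllib.request
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ProbeResult:
    """Outcome of one synthetic probe (hypothetical structure)."""
    ok: bool
    latency_ms: Optional[float]  # None when the probe failed


def run_http_test(url: str, timeout_s: float = 5.0) -> ProbeResult:
    """Generate one HTTP GET toward the target and time the full response."""
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            resp.read()  # include the body transfer in the measured latency
            ok = 200 <= resp.status < 400
    except (OSError, http.client.HTTPException):
        # Covers DNS failures, refused connections, timeouts and HTTP error
        # statuses (urllib raises HTTPError, an OSError subclass, for those).
        return ProbeResult(ok=False, latency_ms=None)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return ProbeResult(ok=ok, latency_ms=elapsed_ms if ok else None)


def build_kpis(results: List[ProbeResult]) -> dict:
    """Aggregate raw probe results into example KPIs."""
    if not results:
        raise ValueError("need at least one probe result")
    availability = 100.0 * sum(r.ok for r in results) / len(results)
    kpis = {"availability_pct": availability}
    latencies = sorted(r.latency_ms for r in results if r.latency_ms is not None)
    if latencies:
        kpis["median_ms"] = statistics.median(latencies)
        # Nearest-rank 95th percentile: smallest sample with at least 95%
        # of all samples at or below it.
        rank = math.ceil(0.95 * len(latencies))
        kpis["p95_ms"] = latencies[rank - 1]
    return kpis
```

In practice you would run probes like `run_http_test` on a schedule from several locations (for one location, something like `build_kpis([run_http_test(url) for _ in range(10)])`) and alert when a KPI crosses a threshold. The nearest-rank percentile is used here only because it is simple and needs no third-party libraries.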

Why Imitate (Or Why Monitor Synthetically)?

To understand why we need to simulate network and user conditions, it helps to consider the broader market changes that created that need. Over the last decade, four key trends have significantly disrupted the way applications and services are delivered and consumed:

- The mainstream adoption of public cloud services, driven by the flexibility, low capital expenditure (capex) and overall quality of service (in terms of availability and uptime) they provide.

- The move from delivering applications as binary images installed on hardware owned by customers to applications delivered as services (SaaS).

- The desire to consume applications and services from different parts of the world and on-the-go, driven by advances in technology enabling high-bandwidth applications on consumer devices.

- The recent trend of more and more companies offering the flexibility to work from home (or from anywhere in the world).

What those four shifts have in common is an increased dependence on the network, and on the internet in general, to achieve their objectives. To drive this point home, I like to use a quote I heard during a recent customer conversation:

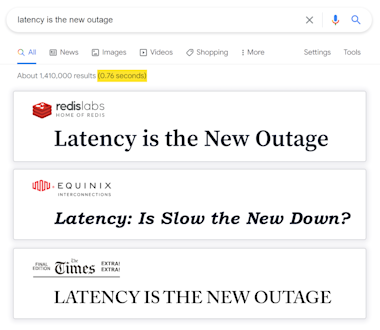

“Latency is the new outage.”

When I first heard that, I googled it and was pleasantly surprised: not only are some pretty big-name companies talking about it, but, more importantly, latency is a metric that even Google (as dominant as it is in search) is paranoid enough about to display right in its search results.

If I had to sum all that up in one sentence, I’d say the biggest driver of our reliance on networks has been the shift to delivering “Anything as-a-Service” to anyone, anywhere in the world, with the expectation that it performs as well as it would if it were delivered within the same building.

That reliance, in turn, has fueled the growth of synthetic monitoring. Synthetic monitoring earns its money by simulating the dynamic and varied network conditions that reflect the nature of today’s network traffic, and by helping you visualize, debug and pinpoint the root cause of issues when they occur.

Now let’s get into the value of synthetic monitoring to specific business use cases with the help of some real-world examples.

Tying It to Business Outcomes

In building Kentik Synthetics, our team spoke with well over one hundred customers, including service providers, digital enterprises (SRE and DevOps teams) and corporate IT teams. I’ve distilled our learnings from those conversations into the following business-impacting use cases for synthetic monitoring:

- Proactive monitoring: Regardless of the type of business you run, you never want a customer to tell you that something in your product or service experience is broken or unavailable. A tool that continuously shows you the customer’s experience and notifies you the moment it degrades is invaluable. This is the fundamental use case that synthetic monitoring addresses: tracking the uptime, availability, reachability and overall end-user experience of the critical services your customers pay you for.

- Holding third-party vendors / service providers / partners accountable: If you are an ecommerce company providing a shopping-cart checkout experience, you want users to move through it with as little friction as possible. Unfortunately, today’s web services rely on tens, if not hundreds, of calls to external services (like payment processors, third-party sellers, shopping platforms, etc.) as part of their implementation. When something goes wrong, synthetic monitoring can help you pinpoint where: whether the issue is in the network or the application, and whether it lies in a service you own or one run by an external provider.

- Benchmarking and baselining performance: Service providers compete on the performance they deliver to their customers. What better way to prove your level of service than to compare your performance with that of your competitors and present it with hard numbers? Baselining is also useful for comparing network performance when evaluating different SD-WAN solutions.

- Preparing for significant network changes or transitions: Has your company made a strategic decision to enter a new market? Or perhaps it isn’t a strategic decision at all, but an acquisition that is forcing you to consolidate infrastructure. Wouldn’t it be nice to be confident, before you “flip the switch” and move real traffic over, that things won’t go completely haywire? Similarly, if your company has decided to move to the public cloud, wouldn’t it be nice to know the impact of moving a specific service (or a portion of it) from your on-premises data center to the public cloud before you switch your user traffic to it?

- Measuring and adhering to SLAs / SLOs: Are you a service provider delivering hosting services through a worldwide network of points of presence (PoPs)? Do you care about monitoring connectivity and performance between these sites? Do your customers ask you about the level of service you are providing them? Synthetic monitoring lets you measure all of this and report on it against your SLAs and SLOs.

- Intelligent route optimization: Service providers and network operators often want to make routing and interconnection decisions based on cost and performance. Running synthetic monitors along transit and peering routes and analyzing the results programmatically can help you automate some of this decision-making.
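
The SLA/SLO and route-optimization use cases both lend themselves to automation. As a rough illustration, the Python sketch below takes per-path results aggregated from synthetic tests, checks each path against an SLO, and picks the cheapest path that still meets it. Everything here is hypothetical: the `PathStats`, `Slo`, `meets_slo` and `pick_route` names, the path names and the cost figures are made up for illustration, and actually steering traffic (for example, by changing BGP policy) is out of scope.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PathStats:
    """Aggregated synthetic-test results for one transit or peering path (hypothetical)."""
    name: str
    p95_latency_ms: float
    loss_pct: float
    cost_per_mbps: float  # hypothetical unit cost used for the trade-off


@dataclass
class Slo:
    """Hypothetical performance objective a path must meet."""
    max_p95_latency_ms: float
    max_loss_pct: float


def meets_slo(path: PathStats, slo: Slo) -> bool:
    """True when the path's measured performance stays within the SLO."""
    return (path.p95_latency_ms <= slo.max_p95_latency_ms
            and path.loss_pct <= slo.max_loss_pct)


def pick_route(paths: List[PathStats], slo: Slo) -> Optional[PathStats]:
    """Cheapest path that satisfies the SLO, with ties broken by lower latency.

    Returns None when no path qualifies.
    """
    eligible = [p for p in paths if meets_slo(p, slo)]
    if not eligible:
        return None
    return min(eligible, key=lambda p: (p.cost_per_mbps, p.p95_latency_ms))
```

Returning `None` when no path qualifies is a deliberate choice: it surfaces an SLO problem for a human to look at instead of quietly moving traffic onto whichever path is merely the least bad.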

Are one or more of the above use cases crucial to your business? If so, definitely stay tuned for the follow-up posts in this series. I will delve into each use case in detail and show you how Kentik can help you address your key pain points along the way. Are there other use cases that we have not outlined? If so, we would love to work with you to address those with synthetics.

Kentik Synthetics

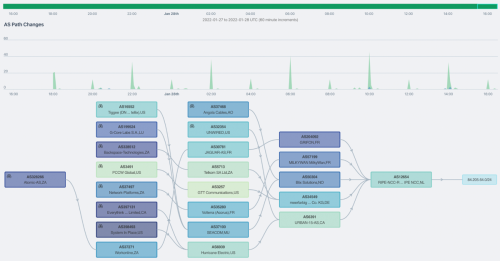

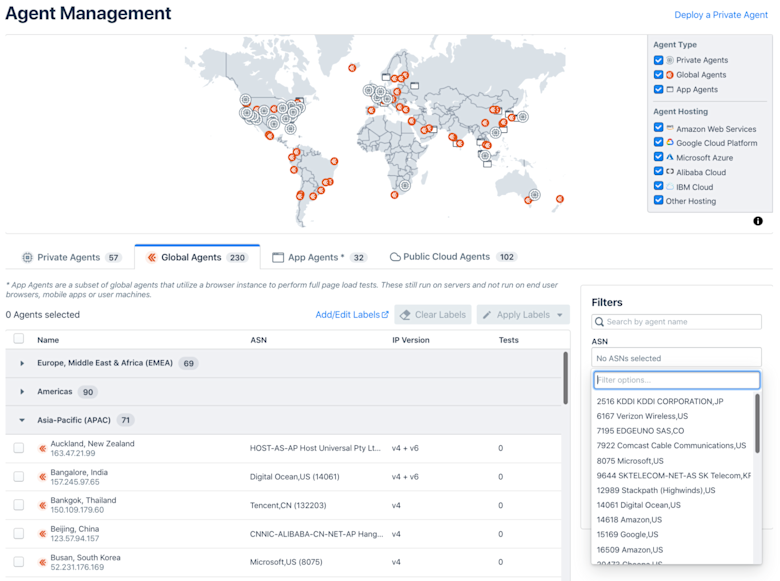

Kentik Synthetics provides hundreds of hosted agents, located across different geographic regions and provider networks (ASes), that can perform the tests needed to address the use cases above. You also have the option of running these agents on your own infrastructure and/or within your own networks (as private agents).

If you don’t want to wait for the next post in this series to learn more, you can get started with Kentik or reach out to us and we’ll show you a demo of the product.