Hybrid vs. Multi-cloud: The Good, the Bad and the Network Observability Needed

Summary

How do you pinpoint latency problems between systems in a hybrid or multi-cloud environment? It requires insight into the complete path, end-to-end, hop-by-hop.

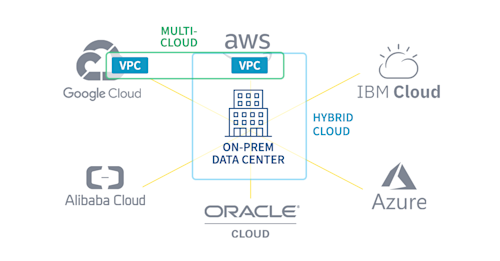

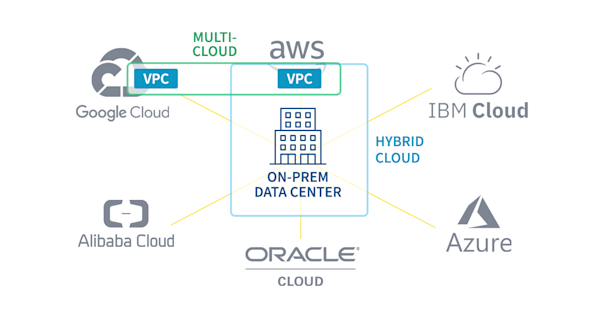

Understanding the difference between hybrid cloud and multi-cloud is pretty simple. Though if you’re still a bit cloudy on the topic, I’ll use just a few words to clear it up. The diagram above shows a hypothetical company with its data center in the center of the building. The public clouds (representing Google, AWS, IBM, Azure, Alibaba and Oracle) are all readily available. Outlined in light blue is the hybrid cloud, which includes the on-premises network as well as the virtual private cloud (VPC) in the AWS public cloud.

If a company has two or more VPCs, either in the same cloud or in different clouds, this is considered a multi-cloud, as outlined above in green.

Hybrid Cloud Benefits

Some of the biggest benefits when adopting a hybrid-cloud configuration are:

- Applications in the cloud often have greater redundancy and elasticity. This allows DevOps teams to configure the application to increase or decrease system capacity, such as CPU, storage, memory and input/output bandwidth, all on demand.

- Moving to the cloud can also increase performance. Many companies find that matching the cloud's performance while hosting the application on-premises is frequently CAPEX-prohibitive.

Multi-cloud Benefits

Companies take advantage of multiple clouds for a few reasons:

- Different cloud providers are better at different services. For example, some DevOps teams feel that AWS is better suited to infrastructure services such as DNS and load balancing. Google, on the other hand, might be a better platform for machine-learning computations.

- CAPEX fees and proximity to end users can also be factors.

- Organizations may intentionally use multiple cloud providers to mitigate risk, in the event that one provider has a major outage.

VPCs and Security

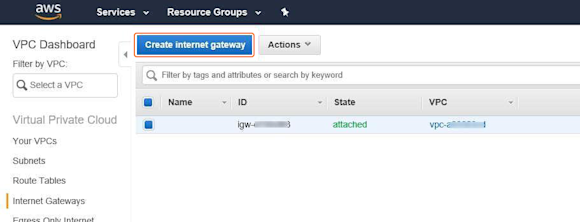

Cloud does not equal internet. In both hybrid and multi-cloud configurations, all of the customer data stays private and cannot be accessed via the internet unless the network team chooses to allow it. The screenshot above shows how easy it is in AWS to select a VPC and then click a button to “Create internet gateway” in order to grant internet access.

If a customer-facing application is moved to the cloud in order to improve performance, a direct internet connection using a gateway can be put in place. This conserves bandwidth on the corporate internet connection.

It is, however, sometimes necessary to set up a connection between VPCs in order to allow workloads to communicate. For example, perhaps the systems in AWS need to send data to the VPC in Google Cloud in order to perform AI operations. When this connection is provisioned, can we just trust it? How can we be sure that there is ample bandwidth? This connection crosses networks that we do not control and whose traffic we cannot observe directly. There are, however, ways to monitor the performance.

But Cloud Adoption Can Introduce Problems

The adoption of these new cloud infrastructures has helped many companies improve the availability and response times of their applications for end users. Cloud providers have made it easy to configure infrastructure-as-a-service, including the network constructs, so application developers can easily change network configurations. This ease can create problems such as unintentionally routing traffic to the internet, which introduces unnecessary risk, cost and performance degradation. And sometimes, the application developers don’t involve the network team until there is a problem. When the network team investigates, they may find all kinds of problems, such as ingress and egress points with no security policies, internal communications routed over internet gateways, abandoned gateways, abandoned subnets with overlapping IP address space, or VPC peering connections with asymmetric routing policies.
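One of the problems listed above, overlapping IP address space, is straightforward to check for once the subnet inventory has been exported. The following is a minimal sketch using Python's standard `ipaddress` module. The subnet names and CIDR ranges are hypothetical, and a real audit would pull the inventory from each cloud provider rather than from a hard-coded dictionary.

```python
from ipaddress import ip_network
from itertools import combinations


def find_overlapping_subnets(subnets):
    """Return pairs of subnet names whose CIDR ranges overlap.

    `subnets` maps a subnet name to its CIDR string.
    """
    parsed = {name: ip_network(cidr) for name, cidr in subnets.items()}
    overlaps = []
    # Compare every pair once; fine for small inventories.
    for (name_a, net_a), (name_b, net_b) in combinations(parsed.items(), 2):
        if net_a.overlaps(net_b):
            overlaps.append((name_a, name_b))
    return overlaps


# Hypothetical inventory, for illustration only.
subnets = {
    "aws-vpc-prod": "10.0.0.0/16",
    "aws-vpc-abandoned": "10.0.128.0/20",
    "gcp-vpc-ml": "10.1.0.0/16",
}

print(find_overlapping_subnets(subnets))
```

Here the abandoned subnet falls inside the production range, so that pair would be flagged for the network team to review.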

At the same time, the move to cloud has impaired the ability of legacy traffic monitoring tools to troubleshoot latency problems between systems. This is because, much like an on-premises network, each cloud is a restricted network full of routers, switches and servers, and it can span the entire globe. Without a cloud-aware traffic observability platform, how your organization’s traffic traverses each vendor’s cloud is a mystery.

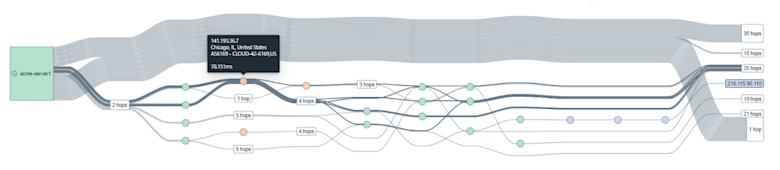

Regaining hop-by-hop network observability within hybrid and multi-clouds can be done through the use of synthetic monitors. These lightweight and efficient software agents are great for proactively keeping tabs on cloud network performance by providing details on things like latency, packet loss and jitter. Furthermore, they provide IT operations with an understanding of the routed paths taken through the hybrid and multi-cloud networks combined.
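To make the three metrics concrete, here is a rough sketch of how latency, packet loss and jitter could be derived from a series of probe results. It assumes each probe yields a round-trip time in milliseconds, or `None` when the probe was lost. The function name and the sample values are hypothetical, and jitter is simplified here to the mean absolute difference between consecutive received round-trip times, which is only one of several definitions in use.

```python
from statistics import mean


def summarize_probes(rtts_ms):
    """Summarize probe results.

    `rtts_ms` is a list of round-trip times in milliseconds,
    with None marking a probe that received no reply.
    """
    if not rtts_ms:
        raise ValueError("at least one probe result is required")

    received = [r for r in rtts_ms if r is not None]
    loss_pct = 100.0 * (len(rtts_ms) - len(received)) / len(rtts_ms)
    avg_latency = mean(received) if received else None

    # Simplified jitter: mean absolute change between consecutive replies.
    deltas = [abs(b - a) for a, b in zip(received, received[1:])]
    jitter = mean(deltas) if deltas else 0.0

    return {
        "loss_pct": loss_pct,
        "avg_latency_ms": avg_latency,
        "jitter_ms": jitter,
    }


# Hypothetical results from five probes; the third one was lost.
summary = summarize_probes([20.0, 24.0, None, 20.0, 24.0])
print(summary)
```

A production agent would also timestamp each probe and track these values per path, so that a change in any of them can be tied to a change in routing.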

Network Observability for All Clouds

A network observability platform optimized for hybrid and multi-cloud networks will combine data from synthetic monitors with routes received from BGP. The marriage of these two forms of telemetry allows NetOps to isolate congestion issues for a specific connection down to the individual peers, internet exchange points or content delivery networks (CDNs) that are causing unacceptable latency.
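As an illustration of combining the two forms of telemetry, the sketch below takes a hop-by-hop path (as a traceroute-style synthetic test might report it) and a small table of BGP routes, then attributes the largest latency increase to the network that originates the matching prefix. This is not how any particular platform implements it. The function names, the documentation-range IP addresses and the AS numbers are all hypothetical, and per-hop deltas from real traceroutes are noisy enough that a single measurement should not be trusted on its own.

```python
from ipaddress import ip_address, ip_network


def origin_asn(ip, bgp_routes):
    """Longest-prefix match of `ip` against (prefix, asn) pairs."""
    addr = ip_address(ip)
    best = None
    for prefix, asn in bgp_routes:
        net = ip_network(prefix)
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, asn)
    return best[1] if best else None


def worst_hop(hops, bgp_routes):
    """Find the hop that adds the most latency over the previous hop.

    `hops` is an ordered list of (ip, cumulative_rtt_ms) from the source.
    """
    worst = None
    prev_rtt = 0.0
    for ip, rtt in hops:
        added = rtt - prev_rtt
        if worst is None or added > worst["added_ms"]:
            worst = {"ip": ip, "added_ms": added, "asn": origin_asn(ip, bgp_routes)}
        prev_rtt = rtt
    return worst


# Hypothetical BGP table and path, for illustration only.
routes = [
    ("192.0.2.0/24", 64496),
    ("198.51.100.0/24", 64497),
    ("203.0.113.0/24", 64498),
    ("203.0.113.128/25", 64499),
]
hops = [
    ("192.0.2.1", 1.5),
    ("198.51.100.7", 4.0),
    ("203.0.113.200", 48.0),
    ("203.0.113.10", 50.0),
]

print(worst_hop(hops, routes))
```

In this made-up path, the third hop adds 44 ms and matches the more specific /25 route, so the latency would be attributed to AS 64499 rather than to the covering /24.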

With the right platform for proactive monitoring of hybrid and multi-cloud networks, the NetOps team can identify exactly where traffic was impacted and then take corrective measures.

To learn more about network observability into your hybrid and multi-cloud network, start a free trial today.