A Guide to Cloud Monitoring Through Synthetic Testing

Summary

The first in a three-part guide series on using continuous synthetic testing as a comprehensive cloud monitoring solution to find problems before they start.

A three-part guide to assuring performance and availability of critical cloud services across public and hybrid clouds and the internet

What is cloud monitoring?

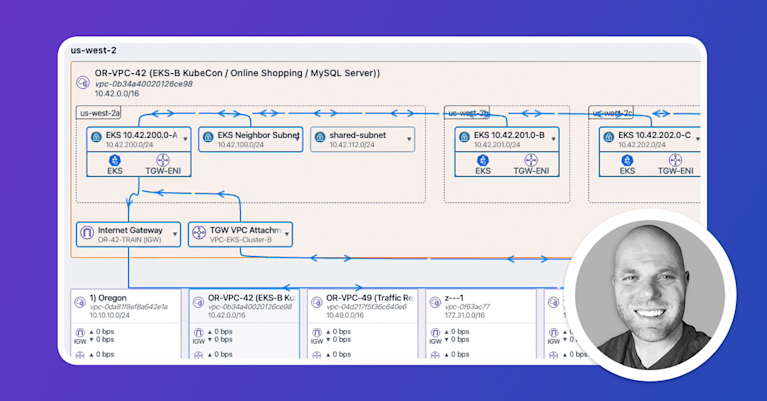

As organizations move to cloud-based resources to become more nimble and scale their IT infrastructure, the challenge of cloud monitoring has emerged. Cloud monitoring involves observing and managing operational workflows in a cloud-based IT infrastructure. Monitoring should be designed to confirm the availability and performance of websites, servers, VPC containers, gateways, load balancers, applications, and other cloud infrastructure such as Kubernetes deployments.

Cloud monitoring should cover both actual traffic and test traffic. This guide focuses on monitoring test traffic using synthetic testing.

What are some types of cloud monitoring?

Networking in and with the cloud involves managing the interconnections of a wide array of devices, services, and applications. You’ll want to test your connection to and the performance of traffic flow from your website and applications through your new and existing cloud resources.

Find a tool that lets you monitor AWS, Microsoft Azure, and Google Cloud. It should cover website, virtual machine, database, virtual network, and cloud storage monitoring, and it should show traffic flows between and within your cloud resources.

With synthetic monitoring, you can see traffic flows between and within pods deployed on Kubernetes clusters. The result is an integrated view that helps you identify performance problems before they impact users. Synthetic testing enables you to monitor and drill into cloud and data center environments, troubleshoot VPC flows, subnets, and east-west traffic, and check device interfaces.

Why cloud monitoring through continuous testing matters

Find problems before your users do

Continuous synthetic monitoring of your cloud environment helps you detect availability and performance problems before they impact end users. That holds whether the issues originate in services you have deployed yourself or in a third-party resource. From the customer’s perspective, the difference doesn’t matter.

Synthetic monitoring also helps catch issues that affect only certain users — such as those in a specific geography. And it allows you to test how an application update or an architecture change, such as migration to the cloud or the adoption of a CDN, affects performance before you deploy the changes to customers.
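To make the geography point concrete, here is a minimal sketch of how probe results might be grouped by region to spot a problem that only some users see. The region names, the `(region, ok)` result shape, and the 5% failure threshold are all assumptions chosen for illustration, not part of any particular monitoring product.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def failure_rate_by_region(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the fraction of failed synthetic checks for each region."""
    totals: Dict[str, int] = defaultdict(int)
    failures: Dict[str, int] = defaultdict(int)
    for region, ok in results:
        totals[region] += 1
        if not ok:
            failures[region] += 1
    return {region: failures[region] / totals[region] for region in totals}


def degraded_regions(results: Iterable[Tuple[str, bool]],
                     max_failure_rate: float = 0.05) -> List[str]:
    """List regions whose failure rate exceeds the (assumed) threshold."""
    rates = failure_rate_by_region(results)
    return sorted(region for region, rate in rates.items() if rate > max_failure_rate)


# Hypothetical results: each tuple is (region the probe ran from, did it succeed).
sample = (
    [("us-east", True)] * 20
    + [("eu-west", True)] * 15 + [("eu-west", False)] * 5
    + [("ap-south", True)] * 10
)
print(degraded_regions(sample))
```

In this made-up sample the overall failure rate is only 10%, yet every failure comes from one region, which is the kind of pattern an aggregate view can hide.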

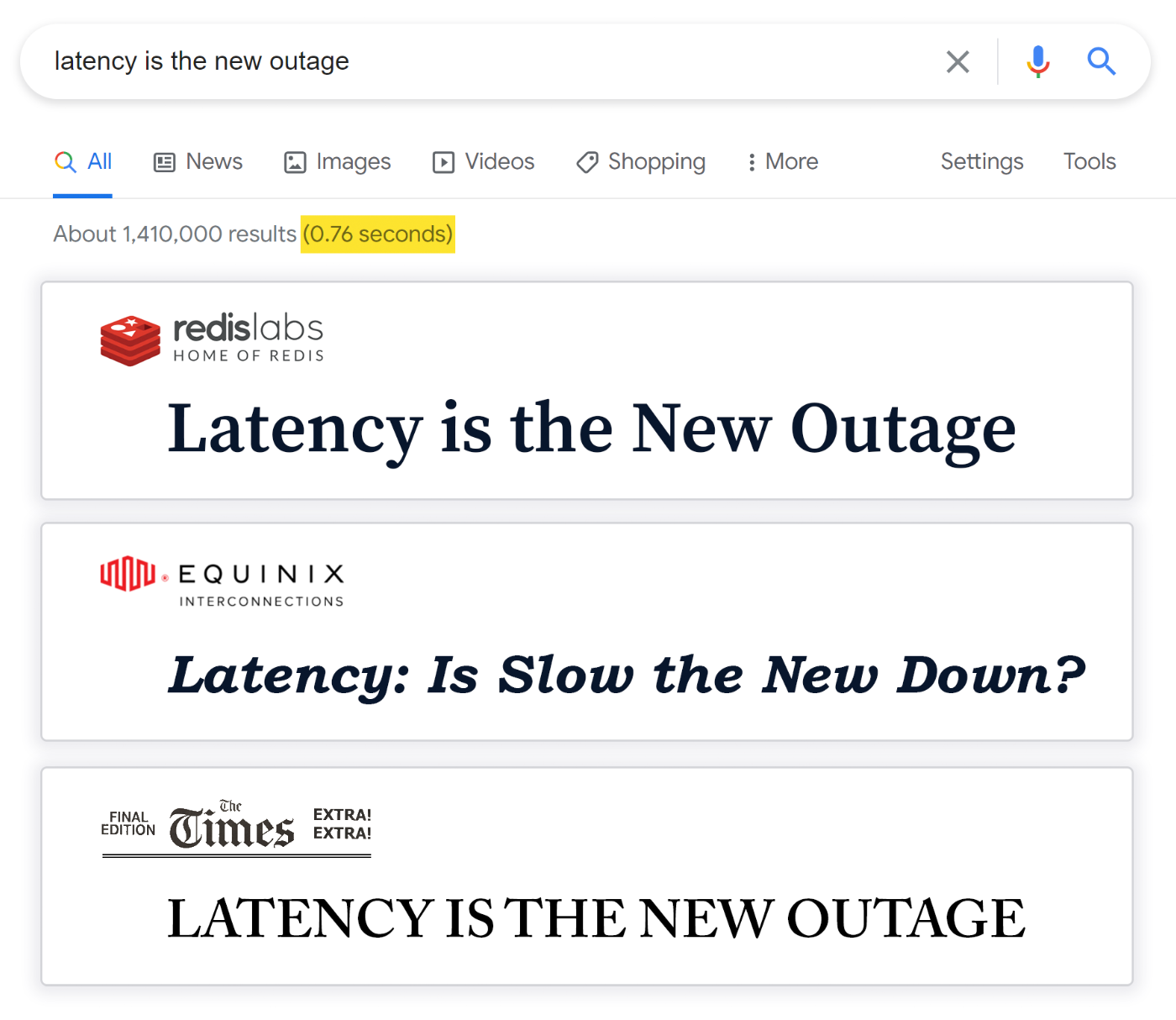

In short, continuous cloud monitoring helps you ensure the smooth digital experience that users expect in today’s world, where subsecond delays or short periods of unavailability can break SLAs. It also provides the reports you need to validate SLA adherence.
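As a rough illustration of SLA validation, the sketch below turns a batch of synthetic check results into an availability figure and compares it with a target. The result shape `(ok, latency_ms)`, the rule that a response slower than the latency budget counts as a miss, and the specific targets are assumptions for the example; real SLAs define these terms in their own ways.

```python
from typing import Dict, Sequence, Tuple, Union


def sla_report(results: Sequence[Tuple[bool, float]],
               availability_target: float,
               latency_budget_ms: float) -> Dict[str, Union[int, float, bool]]:
    """Summarize synthetic checks against an availability target.

    A check counts as good only if it succeeded AND answered within the
    latency budget (an assumption; adjust to match your own SLA wording).
    """
    if not results:
        raise ValueError("no check results to evaluate")
    total = len(results)
    good = sum(1 for ok, latency_ms in results if ok and latency_ms <= latency_budget_ms)
    availability = good / total
    return {
        "checks": total,
        "good": good,
        "availability": availability,
        "meets_sla": availability >= availability_target,
    }


# Hypothetical day of checks: 998 fast successes, one slow success, one failure.
checks = [(True, 120.0)] * 998 + [(True, 1500.0), (False, 0.0)]
report = sla_report(checks, availability_target=0.999, latency_budget_ms=1000.0)
print(report["availability"], report["meets_sla"])
```

Counting slow responses as misses reflects the point above that subsecond delays, not just outages, can break an SLA.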

The cloud is not a singular entity

It’s a sprawling, ever-changing stack of disparate services, infrastructure, applications, and other resources that need continuous monitoring to guarantee operational excellence.

From API gateways and WANs to public-facing SaaS apps and private LOB apps, to CDNs and transit networks and beyond, any wrinkle in the complex set of technologies that power your cloud will be felt by users — unless you catch it first via synthetic monitoring.

That’s why frequent, continuous, and systematic monitoring of all resources within your cloud environment is critical for guaranteeing the digital experience that your company promises to customers.

How active cloud monitoring helps you solve problems before they start

Understanding what is happening in a modern environment requires monitoring that is both autonomous, meaning it happens automatically, and active, meaning it proactively sends synthetic traffic across your environment and tracks the response.
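A single active check can be sketched in a few lines: send one synthetic request, time it, and record whether the response looked healthy. This is a simplified illustration using only the Python standard library. The `ProbeResult` type, the `run_probe` helper, and the rule that any 2xx or 3xx status counts as healthy are assumptions made for the example.

```python
import time
import urllib.request
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProbeResult:
    target: str
    ok: bool
    latency_ms: float
    error: Optional[str] = None


def http_get_status(url: str, timeout_s: float = 5.0) -> int:
    """Send one real HTTP GET and return the response status code."""
    with urllib.request.urlopen(url, timeout=timeout_s) as resp:
        return resp.status


def run_probe(target: str,
              send: Callable[[str], int] = http_get_status) -> ProbeResult:
    """Send one synthetic request to `target` and record the outcome.

    `send` is injectable so the probe can be exercised without a network.
    """
    start = time.perf_counter()
    try:
        status = send(target)
        ok = 200 <= status < 400
        error = None if ok else f"unexpected status {status}"
    except Exception as exc:  # timeouts, DNS failures, HTTP errors, refused connections
        ok, error = False, str(exc)
    latency_ms = (time.perf_counter() - start) * 1000.0
    return ProbeResult(target, ok, latency_ms, error)


# Offline demonstration with a stand-in sender that always reports 503.
result = run_probe("https://example.invalid/health", send=lambda url: 503)
print(result.ok, result.error)
```

Making this autonomous is then a matter of running such probes on a schedule from several vantage points instead of by hand.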

Without an autonomous approach, orchestrating monitoring and testing for large-scale applications consisting of dozens of microservices that may be distributed across multiple clouds simply isn’t feasible.

And without active monitoring, you’re stuck waiting on user-generated traffic flows before you can detect and respond to problems. You struggle to catch issues that affect only certain geographies, for example, or that involve services that aren’t part of typical flows.

Active (synthetic) monitoring puts you in control. Stop waiting passively for problems to arise. Start finding and fixing them actively.

Next up: Synthetic testing

Synthetic testing provides insights about what will happen if conditions change. It’s a means of future-proofing your network as you add DNS servers, add new cloud regions or providers, integrate new third-party services, or start supporting users based in a new region. It also offers a preview of what might result if a cloud provider has an outage or one of your data centers goes down. In short, synthetic testing keeps you prepared for the future and ready to react, while flow/actual traffic monitoring ensures you catch issues that slip past your synthetic testing safeguards. Together, they enable network observability.

Stay tuned for our next post in this series: Everything You Need to Know About Synthetic Testing.