Managing the hidden costs of cloud networking - Part 2

Summary

Companies considering cloud adoption should ensure that they take these valuable lessons into account to avoid hidden cloud costs.

In the first post of this series, I detailed ways companies considering cloud adoption can achieve quick wins in performance and cost savings. While these benefits certainly hold in theory, realizing them in practice becomes increasingly difficult as applications and their networks grow more complex.

To illustrate, I’d like to talk about my time as a network engineer at Company X. The company’s foray into the cloud stemmed from a hardware expansion: several racks’ worth of equipment purchased to support new SMS capabilities. Once those SMS capabilities were released into the product suite, the hardware proved to be overkill, and the equipment was ultimately repurposed for other business needs.

This marked an important transition for the company. From there on out, our development teams were instructed to build all new services in the cloud, with the mandate that the newly delivered applications function in both our cloud and data center.

In this post, I will focus on cost management lessons learned from two of those cloud projects:

- a geographically distributed service

- a network data aggregation tool

Hidden performance costs

My first introduction to applications running in a distributed environment was one of our large, internal, Software-as-a-Service (SaaS)-based applications, which we ran on specific hardware in San Diego, CA. Around this time, the Southwest was experiencing rolling and persistent power outages, so we decided to host the identity and access management (IAM) service for this application in the Pacific Northwest.

After making the switch, customer logon latencies surged from 11 ms to around 1.2 seconds, which led to painful timeouts and a poor overall user experience.

Was this a network issue or a coding issue?

Technically, it was both. The request latency was appropriate from a network perspective, given the physical distance between the two data centers. The application, however, still used request timers that assumed the service was close by, which is why the added latency surfaced as timeouts.

So, while the issue was not really caused by developers, the solution was definitely going to come from them: account for the new networking constraints that geographically distributed requests introduce, then adjust request timers, acknowledge the delay in the UI, and so on.
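As a rough illustration of adjusting request timers, the sketch below derives a timeout from measured round-trip times instead of hard-coding one. The function names, the 99th-percentile choice, the headroom factor, and the floor are all assumptions for illustration, not what we actually deployed.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def suggest_timeout_ms(rtt_samples_ms, pct=99, headroom=2.0, floor_ms=100.0):
    """Suggest a request timeout from observed round-trip times.

    Takes a high percentile of the samples, multiplies it by a headroom
    factor, and never goes below floor_ms. All defaults are hypothetical.
    """
    if not rtt_samples_ms:
        raise ValueError("need at least one latency sample")
    return max(floor_ms, percentile(rtt_samples_ms, pct) * headroom)


# Made-up samples: a nearby service versus one hosted in a distant region.
nearby = [10, 11, 12, 11]
distant = [1100, 1200, 1250, 1300]
print(suggest_timeout_ms(nearby), suggest_timeout_ms(distant))
```

A timer derived this way moves with the deployment: when the service relocates, the measured latency changes and the timeout follows, rather than silently staying at a value tuned for the old location.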

This experience taught me that it is vital for teams that transition to distributed solutions to consider these extra performance dimensions.

Troubleshooting cloud networks

The IAM service example was straightforward, but imagine this misstep between dev and networking teams as a building block in what can potentially be a much larger issue in a distributed application at scale.

To help engineers avoid these missteps, cloud platforms and infrastructure as code (IaC) have emerged as valuable tools for programmatically managing this networking complexity. They have also significantly redistributed networking responsibilities. With IaC, networking decisions are made not just by network engineers but also by devs and DevOps engineers, so these decisions are often made independently across teams with different scopes and priorities. Ideally, these loosely coupled decisions allow teams to run their code in a (miraculously) harmonized network, where the actions of one team don’t affect another.

But, unfortunately, reality is not the ideal state. So, where do I look when things go wrong? With more networking decisions being made via IaC, DNS is often the culprit, because the name a request resolves determines which path, firewall, load balancer, cache, and failover route it uses, all of which must be considered, configured in IaC, and deployed correctly.

Hidden financial costs

Near the end of my ten years at Company X, we were building a data aggregator to help us identify and isolate networking issues while providing the information we needed to answer our most asked questions:

- Is the request communicating to internal or external services?

- What path is the request taking through the network?

- How is DNS involved in the request?

- Is the request interacting with any firewalls? If so, L3? L4? L7?

- How is the request affecting the network’s load balancers?

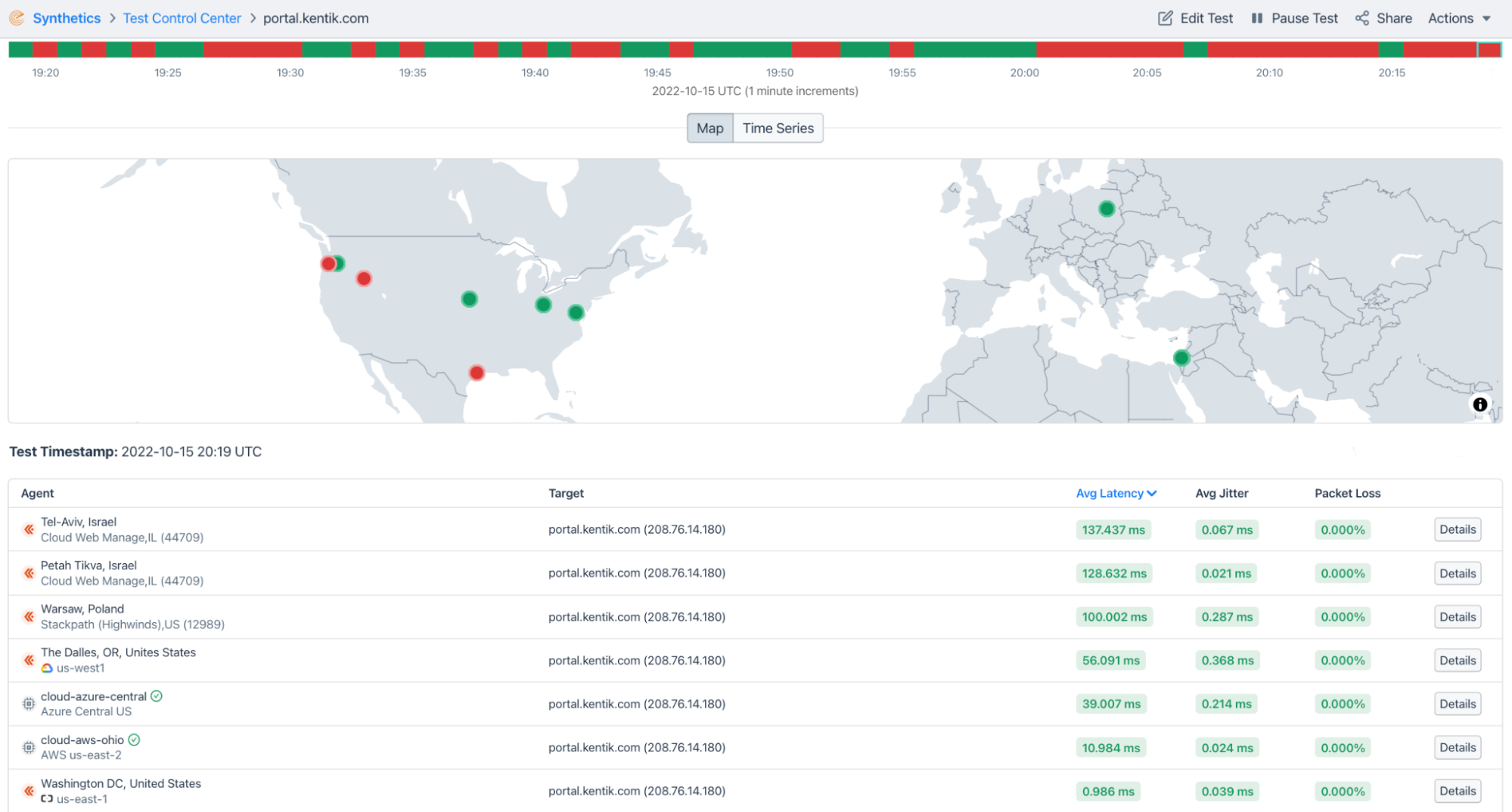

- Alongside the latency, is the request experiencing any packet loss or jitter?

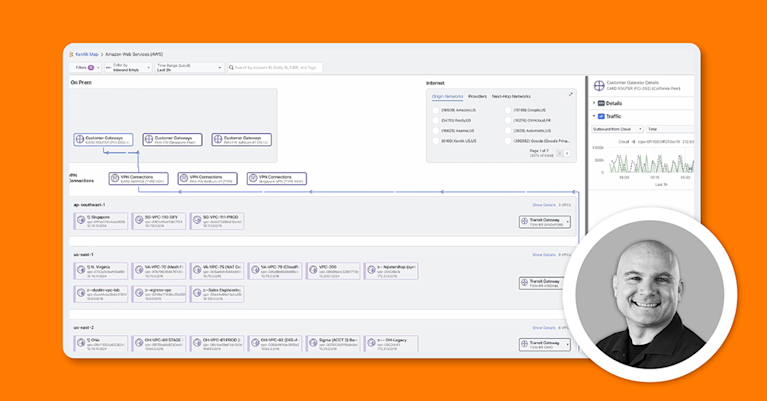

To do this, we needed to be able to ingest trillions of records, standardize data formatting and tagging across multiple metric streams, compress this data, and ultimately deposit it onto a “single pane of glass” visualization tool.
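To make the "standardize, tag, compress" step concrete, here is a minimal sketch using only the Python standard library. The field aliases, the stream names, and the newline-delimited JSON format are hypothetical choices for illustration; the actual tool's schema is not described here.

```python
import gzip
import json

# Hypothetical mapping from stream-specific field names to one shared schema.
FIELD_ALIASES = {
    "src": "source",
    "src_ip": "source",
    "dst": "destination",
    "dst_ip": "destination",
    "rtt_ms": "latency_ms",
    "latency": "latency_ms",
}


def normalize(record, stream):
    """Lowercase keys, rename known aliases, and tag the record with its stream."""
    out = {}
    for key, value in record.items():
        key = key.strip().lower()
        out[FIELD_ALIASES.get(key, key)] = value
    out["stream"] = stream
    return out


def pack(records):
    """Serialize records as newline-delimited JSON and gzip-compress them."""
    lines = "\n".join(json.dumps(r, sort_keys=True) for r in records)
    return gzip.compress(lines.encode("utf-8"))


def unpack(blob):
    """Reverse of pack(): decompress and parse each JSON line."""
    text = gzip.decompress(blob).decode("utf-8")
    return [json.loads(line) for line in text.splitlines() if line]


# Two made-up records from differently shaped streams end up with the same keys.
batch = [
    normalize({"SRC_IP": "10.0.0.1", "rtt_ms": 12}, "flow"),
    normalize({"src": "10.0.0.2", "latency": 15}, "probe"),
]
assert unpack(pack(batch)) == batch
```

Normalizing before compression keeps every stream queryable by the same field names, which is what lets a single visualization layer sit on top of all of them.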

After deploying the tool, we started looking for any serious performance issues. Did the single pane of glass accurately represent the detailed metrics we were forwarding? Did the data arrive in a timely fashion? Could we use the system to troubleshoot faster? These are all excellent questions that we needed to answer.

A question we did not expect, however, was an inquiry from the finance team asking why our DEV project (that’s right, not even in production yet) was costing $40,000 per month. Yikes!

Upon further inspection, it turned out that this time the networking issue was code-driven. We were always calling services by their publicly available names instead of their internal names or IP addresses, which generated a lot of unnecessary outbound (egress) charges.

We were able to cheaply fix this by providing a simple checkbox in the UI to select private versus public addresses.
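A check along these lines can catch the same mistake before the bill does. The sketch below uses Python's standard `ipaddress` module to flag configured targets that are not in a private range; the service names and addresses are made up, and it assumes names have already been resolved to IP addresses.

```python
import ipaddress


def is_internal(address):
    """True when Python's ipaddress module classifies the address as private.

    is_private covers the RFC 1918 ranges as well as other ranges that are
    not globally reachable, such as loopback.
    """
    return ipaddress.ip_address(address).is_private


def flag_public_targets(targets):
    """Return the names of services whose configured address is not private."""
    return sorted(name for name, addr in targets.items() if not is_internal(addr))


# Hypothetical service-to-address configuration.
targets = {
    "metrics-store": "10.0.0.5",
    "collector": "8.8.8.8",
    "dashboard": "192.168.1.20",
}
print(flag_public_targets(targets))
```

Running a check like this in CI or at startup is one way to make the private-versus-public choice visible instead of leaving it implicit in whichever hostname a developer happened to use.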

All this to say, moving massive amounts of data in a cloud or hybrid-cloud environment can be very costly:

- Sending traffic from a cloud back into campus or DC costs money, whereas this type of traffic was previously free.

- Sending traffic from a cloud, across the public internet, back to a cloud resource incurs outbound charges, even though “everything is running in the cloud.”
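To get a feel for how quickly outbound charges add up, a back-of-the-envelope estimate helps. The per-gigabyte rates below are placeholders, not any provider's actual pricing; substitute the figures from your own bill.

```python
def monthly_egress_cost(gb_per_day, rate_per_gb, days=30):
    """Estimated monthly outbound charge for a steady daily volume."""
    return gb_per_day * days * rate_per_gb


def implied_monthly_gb(monthly_cost, rate_per_gb):
    """Monthly outbound volume that a given bill implies at a given rate."""
    if rate_per_gb <= 0:
        raise ValueError("rate_per_gb must be positive")
    return monthly_cost / rate_per_gb


# Placeholder inputs: 1,000 GB/day at a hypothetical $0.05 per GB.
print(f"${monthly_egress_cost(1000, 0.05):,.2f} per month")

# Working backwards from a bill at a hypothetical $0.08 per GB.
print(f"{implied_monthly_gb(40_000, 0.08):,.0f} GB per month")
```

Working backwards from the bill is often the more useful direction: it turns a dollar figure into a traffic volume that can be compared against what the application should be sending.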

With the line between app development and networking so blurred in the cloud, a single line of code can sometimes lead to dramatic networking consequences. Having visibility into your network can help you track performance and financial costs quickly, even as network traffic and complexity grow.

Don’t miss Part 3 of this series, where I outline how best to organize cloud networks and teams to facilitate high-velocity development that delivers top value.