Summary

Enterprises are pouring billions into GPUs and AI compute, but most are overlooking the infrastructure that connects it all. Justin Ryburn, field CTO at Kentik, makes the case that the network is the most underestimated variable in whether AI initiatives succeed or fail.

Every enterprise AI roadmap starts the same way. Pick a use case. Secure the GPUs. Hire the ML talent. Build the data pipeline. Deploy the model.

What almost nobody talks about is the infrastructure that connects it all: the network.

That oversight is costing companies millions of dollars in wasted GPU time, failed deployments, and AI initiatives that stall before they ever reach production.

The GPU gold rush created a networking problem

Over the past two years, enterprises have poured unprecedented capital into AI infrastructure. Global AI investment hit $684 billion in 2025, with much of it going toward GPU clusters, cloud compute, and model development. The assumption was straightforward: secure enough processing power, and the rest will fall into place.

That assumption is breaking down. A recent Gartner survey of 782 infrastructure and operations leaders found that only 28% of AI use cases fully succeed and meet ROI targets. One in five fails outright. The causes vary, but many share a root issue: the infrastructure wasn’t ready for what AI needs.

And what AI needs from the network looks nothing like what came before.

AI traffic doesn’t behave like traditional traffic

Traditional enterprise workloads move data in familiar patterns. A user makes a request, a server responds, and the conversation ends. This north-south traffic, client to server, is what most enterprise networks were designed to handle.

AI training disrupts that model. Training jobs generate steady, high-volume data streams between GPUs. This east-west traffic, where thousands of GPUs swap information in lockstep, requires a completely different network design.

These workloads are also far more sensitive to network hiccups. Research from APNIC shows that a packet loss rate of just 0.1% during large-scale training can reduce GPU utilization by over 13%. At 1% loss, GPUs spend less than 5% of their time doing real work. The rest is wasted on retries and re-syncing.

Put differently: you can have the most powerful GPU cluster money can buy, and a minor network issue can turn it into the world’s most expensive space heater.
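To put a rough dollar figure on that space heater, here is a minimal back-of-the-envelope sketch. It treats the 13% figure cited above as the fraction of paid GPU time that is lost, which is a simplifying assumption. The cluster size, hourly rate, and function name are hypothetical and chosen only for illustration.

```python
def wasted_gpu_cost(num_gpus: int, cost_per_gpu_hour: float,
                    hours: float, utilization_drop: float) -> float:
    """Dollar value of GPU time lost to a fractional drop in utilization."""
    if not 0.0 <= utilization_drop <= 1.0:
        raise ValueError("utilization_drop must be between 0 and 1")
    return num_gpus * cost_per_gpu_hour * hours * utilization_drop


# Hypothetical cluster: 1,024 GPUs at $2.00 per GPU-hour over a 30-day month.
# The 0.13 drop mirrors the 0.1% packet loss figure cited above.
monthly_waste = wasted_gpu_cost(1024, 2.00, 30 * 24, 0.13)
print(f"${monthly_waste:,.0f} of GPU time lost per month")
```

Swap in your own cluster size and rates; the point is only that a loss rate that looks negligible on a dashboard scales linearly with every GPU-hour you pay for.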

Inference is shifting the pressure to the edge

The industry’s center of gravity is moving from training to inference. Deloitte estimated that inference workloads accounted for half of all AI compute in 2025, a figure projected to reach two-thirds in 2026. Lenovo’s CEO has forecast a long-term split of 80% inference to 20% training.

This shift carries big network implications. Training can run in remote data centers with fast internal fabrics. Inference, on the other hand, is latency-sensitive and spread out. It needs to run close to users, across edge sites, cloud regions, and hybrid setups.

That means the network is no longer just a data center concern. It’s a problem that spans cloud regions, WAN links, peering points, and transit providers. Most enterprises have very little insight into how this traffic flows across the whole path.

The visibility gap

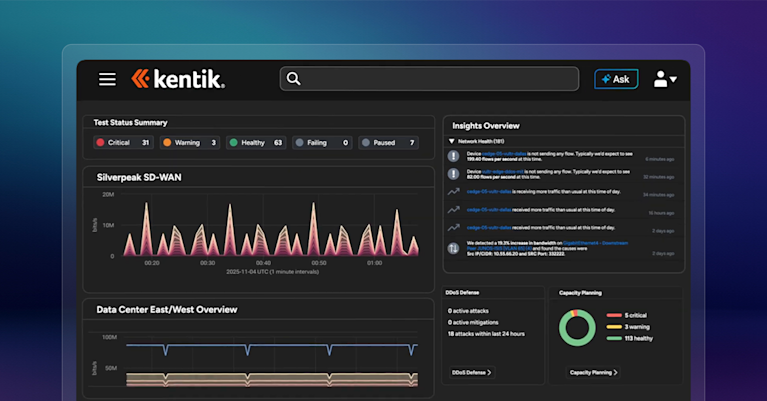

Here’s where the blind spot becomes a business problem. Most organizations monitor their networks for uptime and basic performance. They know when a link goes down. They can see interface utilization on a dashboard.

What they can’t see is how AI inference behaves across their network, and they are completely blind to how it performs out on the internet. They can’t link a spike in training time, usually measured as job completion time (JCT), to a buffer congestion event inside their data center fabric. They can’t tell if their inference traffic is taking the best path to end users, or burning money on pricey transit links when a peering option exists. They can’t spot the subtle packet loss that is quietly cutting GPU output by double digits.

This gap between what teams watch and what they need to know is where network intelligence matters. There is a real difference between monitoring (knowing something is happening) and intelligence (knowing why it’s happening and what to do about it). A dashboard that shows a link at 80% utilization tells you very little, because in AI clusters links run hot all the time. A system that shows you how that traffic is affecting your JCT tells you what matters.
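As one illustration of moving from monitoring toward intelligence, the sketch below checks whether job completion time rises and falls with fabric congestion. It assumes you can already export, per training job, a count of buffer congestion events and the JCT in minutes. The sample numbers and variable names are hypothetical, and a high correlation is only a first-pass signal, not proof of cause.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two samples of equal length (at least 2 points)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-job data: congestion events seen during each job,
# and that job's completion time in minutes.
congestion_events = [2, 5, 1, 9, 14, 3]
jct_minutes = [61, 66, 60, 75, 88, 63]

r = pearson(congestion_events, jct_minutes)
print(f"correlation between congestion and JCT: {r:.2f}")
```

A value near 1.0 suggests that slow jobs coincide with congested fabric and that the network deserves a closer look before anyone blames the model or the GPUs.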

Three things every AI-forward organization should do now

Get real visibility into traffic flows. You can’t fix what you can’t see. Know how AI workloads move data across your systems. Learn which paths they take, where congestion builds, and how traffic splits between transit, peering, and cloud links. If your tools only show device health and interface counters, you’re flying blind.

Treat the network as part of the AI stack. AI teams typically own compute, storage, and model development. The network is someone else’s problem, until it isn’t. Building operational bridges between network engineering and AI/ML infrastructure teams ensures that network performance is a first-class consideration in AI deployment planning, not an afterthought discovered during a postmortem.

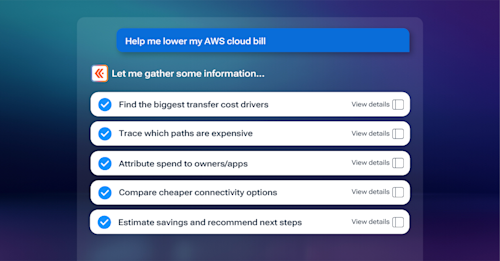

Plan for distributed inference now. As inference scales and spreads across edge sites, cloud regions, and hybrid setups, network complexity will grow. Teams that invest in traffic analytics, smarter peering and transit strategy, and broad visibility across their footprint will run inference at lower cost and higher quality. Those who don’t will pay for it in latency, cloud egress bills, and poor user experiences.
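The question of how traffic splits between transit, peering, and cloud links can be sketched in a few lines. This example assumes flow records have already been tagged with a link type and a byte count; the record format, labels, and values are hypothetical rather than the output of any particular tool.

```python
from collections import defaultdict


def traffic_share(flows):
    """Return each link type's share of total bytes as a fraction of 1.0."""
    totals = defaultdict(int)
    for link_type, byte_count in flows:
        totals[link_type] += byte_count
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {link_type: count / grand_total for link_type, count in totals.items()}


# Hypothetical flow records: (link type, bytes transferred).
flows = [
    ("transit", 600),
    ("peering", 300),
    ("cloud", 100),
    ("transit", 200),
    ("peering", 800),
]

shares = traffic_share(flows)
for link_type, share in sorted(shares.items()):
    print(f"{link_type}: {share:.0%}")
```

Tracking these shares over time shows whether inference traffic is drifting onto paid transit when a peering path exists.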

The bottom line

The AI strategies that succeed over the next few years won’t be the ones with the most GPUs or the biggest models. They’ll be the ones built on infrastructure that can support the demands of AI workloads.

The network is the connective tissue of every AI system. It’s time to stop treating it as plumbing and start treating it as a strategic asset.