Kentik CEO Avi Freedman with PacketPushers on NPM & DDoS

Summary

Avi Freedman recently spoke with Ethan Banks and Greg Ferro of PacketPushers about Kentik’s latest updates, which focus primarily on features that enhance network performance monitoring and DDoS protection. This post includes excerpts from that conversation as well as a link to the full podcast. Avi discusses his vision of appliance-free network monitoring, explains how host monitoring expands Kentik’s functionality, and gives an overview of how we detect and respond to anomalies and attacks.

Kentik CEO Avi Freedman in Conversation with PacketPushers

I recently had the chance to talk with fellow nerds Ethan Banks and Greg Ferro from PacketPushers about Kentik’s latest updates in the arena of network performance monitoring and DDoS protection. I thought I’d share some excerpts from that conversation, which have been edited for brevity and clarity. If you’d like, you can also listen to the full podcast.

Destroying Silos and Ripping Out Appliances

Startup life is hectic, and that is by design. When we started Kentik, we thought there was an opportunity to rip the monitoring appliances out of the infrastructure, and in startup life it’s just a question of how fast you want to go. We’ve decided that we want to go pretty fast. That often involves doubling the company size every year, adding new kinds of customers, and, as a CEO, a whole new area of geekdom about business and marketing. In some ways, figuring out how to explain the technology is a harder problem than building it, especially to people who don’t know what BGP, AS paths, and communities are. They just want to know: is it the goddamn network that’s causing my goddamn problem?

Part of our secret long-term plan is to address how you combine all of this technology. You’ve got APM, metrics, NPM, and DDoS protection, and it all needs to work, and it all needs to be related. But again, back to the startup world: one thing at a time. First, a badass NetFlow tool; then bring the performance side into it; then protect the infrastructure; and then we’ll see where we go from there. These silos really hurt people, and the lack of visibility makes operations very difficult.

Our Cloud-Friendly Network Performance Monitoring

The Kentik base is the Kentik Detect offering, which we’ve had out commercially for about a year and a half. If you’re a super network nerd, we just tell you that it’s a badass NetFlow tool. It lets you see anything you want to know and alerts you to anomalous conditions, but in a way that lets you dig down, because we keep all the details and present them in a way that network and operations folks understand. So the base product is really more about “where’s the traffic going?” than “what’s the performance of the network?”

People used to say that measuring performance means SNMP. Before you retch, let’s say that means measuring congestion. If a link is congested, a basic NetFlow tool lets you double-click on it and see that it’s full because of tons of traffic from the database servers syncing.
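To make the SNMP side concrete: congestion is usually derived by polling an interface’s octet counter (for example `ifHCInOctets` from IF-MIB) twice and comparing the delta with the link speed. The Python sketch below shows only that arithmetic; the polling itself is out of scope, and the function name and parameters are illustrative assumptions, not part of Kentik Detect.

```python
def link_utilization(octets_start: int, octets_end: int, interval_s: float,
                     if_speed_bps: float, counter_bits: int = 64) -> float:
    """Fraction of link capacity used between two octet-counter samples."""
    # Modular subtraction tolerates one counter wrap between the two polls.
    delta_octets = (octets_end - octets_start) % (2 ** counter_bits)
    # Octets to bits, divided by the bits the link could have carried.
    return (delta_octets * 8) / (interval_s * if_speed_bps)


# 337.5 GB transferred in 300 s on a 10 Gbps link
print(round(link_utilization(0, 337_500_000_000, 300, 10_000_000_000), 3))  # 0.9
```

A 10 Gbps link whose counter advanced by 337.5 GB over a 300-second poll works out to 90% utilization. A number like that says the link is busy; it does not by itself say that anything is performing badly.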

NPM is about leveraging data sources that are not just standard NetFlow, sFlow, or IPFIX.

What that doesn’t really tell you is whether there’s a performance problem. The link may be almost full, but there may be no TCP retransmits, and the latency may be good. So really getting into network performance monitoring is about getting data sources that are not just standard NetFlow, sFlow, or IPFIX: data that comes from packets or web logs, or from devices that can send augmented flow data, meaning standard NetFlow plus latency and retransmit information. Then you make it usable, so you can say “It isn’t the network,” or “Aha! It is the network; in fact, it’s that remote network,” or “it’s my data center,” or wherever it is.

If you’re 100% in the cloud, generally what you would do is use an agent that runs on the server and watches the packets. We work with ntop, who’s a granddaddy in the space, and they have something called nProbe, which can watch packets and generate flow records, but also add performance data and metadata. As you deploy that, you have to be careful to avoid Heisenbugs, where your monitoring interferes with production and things start behaving strangely. So sometimes you only watch a percentage of the traffic.
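Watching only a percentage of traffic can be done per packet or per flow. The sketch below, which is an illustration and not how nProbe implements its own sampling options, hashes each connection’s endpoints so that a given flow is either always watched or never watched; that keeps per-connection metrics like retransmits and latency complete for the flows you do see. The `FlowKey` type and `should_sample` function are hypothetical.

```python
import hashlib
from typing import NamedTuple


class FlowKey(NamedTuple):
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    proto: int


def should_sample(flow: FlowKey, rate: float) -> bool:
    """Deterministically keep about `rate` (0.0 to 1.0) of all flows."""
    # Sort the endpoints so both directions of a TCP connection hash the same way;
    # retransmit and latency metrics need to see both sides of the conversation.
    a, b = sorted([(flow.src_ip, flow.src_port), (flow.dst_ip, flow.dst_port)])
    key = f"{a[0]}|{a[1]}|{b[0]}|{b[1]}|{flow.proto}".encode()
    # Map the first 8 bytes of the digest onto [0, 2**64) and compare to the rate.
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return bucket < rate * 2**64


fwd = FlowKey("10.0.0.5", 51514, "10.0.1.9", 5432, 6)
rev = FlowKey("10.0.1.9", 5432, "10.0.0.5", 51514, 6)
print(should_sample(fwd, 0.1) == should_sample(rev, 0.1))  # True
```

Because the endpoints are sorted before hashing, both directions of a connection land in the same bucket, and a rate of 0.1 keeps roughly one flow in ten.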

The agent sends data in IPFIX, a standard format, but also adds the performance data we need (and soon application semantics and some other things), so it looks just like a network device. Every host looks like a network device, we’re getting flow from it, and in the cloud we’ll typically use a BGP table that’s representative of that kind of infrastructure, because you probably don’t speak BGP with your cloud provider. Some people do, if they’re doing anycast, but not with AWS, GCE, Azure, or SoftLayer; not the big guys. So the data’s coming from the servers. If you run hybrid cloud, with at least some of your own infrastructure, then you again get switches, routers, and BGP tables, but the augmented information can come from the host agent.
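For readers who haven’t looked inside IPFIX: an exporter, whether a router or a host agent, sends messages with a 16-byte header followed by Template Sets that describe record layouts and Data Sets that use them (RFC 7011). The Python sketch below builds one such message using only IANA-registered Information Elements. The template ID is arbitrary, and the latency and retransmit fields an agent like nProbe adds would be additional Information Elements, possibly enterprise-specific ones, which are not shown here.

```python
import socket
import struct
import time
from typing import Iterable, Optional, Tuple

IPFIX_VERSION = 10          # RFC 7011 message header version
TEMPLATE_SET_ID = 2         # Set ID reserved for Template Sets
TEMPLATE_ID = 256           # hypothetical; data Template IDs start at 256

# (Information Element ID, length in octets), IANA-registered elements only
FIELDS = [
    (8, 4),    # sourceIPv4Address
    (12, 4),   # destinationIPv4Address
    (1, 8),    # octetDeltaCount
    (2, 8),    # packetDeltaCount
]

Record = Tuple[str, str, int, int]  # (src IP, dst IP, octets, packets)


def _set(set_id: int, body: bytes) -> bytes:
    # Each Set carries a 4-byte header: Set ID and total Set length.
    return struct.pack("!HH", set_id, 4 + len(body)) + body


def build_message(records: Iterable[Record], sequence: int, domain_id: int,
                  export_time: Optional[int] = None) -> bytes:
    # Template record: Template ID, field count, then (IE ID, length) pairs.
    template = struct.pack("!HH", TEMPLATE_ID, len(FIELDS))
    template += b"".join(struct.pack("!HH", ie, size) for ie, size in FIELDS)
    # Data records laid out exactly as the template describes.
    data = b"".join(
        socket.inet_aton(src) + socket.inet_aton(dst) + struct.pack("!QQ", octets, packets)
        for src, dst, octets, packets in records
    )
    body = _set(TEMPLATE_SET_ID, template) + _set(TEMPLATE_ID, data)
    if export_time is None:
        export_time = int(time.time())
    # `sequence` counts Data Records already sent in this stream, modulo 2**32.
    header = struct.pack("!HHIII", IPFIX_VERSION, 16 + len(body),
                         export_time, sequence % 2**32, domain_id)
    return header + body
```

Putting the template and data in the same message keeps the example short; over UDP an exporter resends templates periodically rather than in every message, and collectors conventionally listen on UDP port 4739, the IANA-assigned IPFIX port.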

Our new host agent gives you metrics like retransmits, out-of-order packets, and latency.

The goal of our first NPM release is to really get at whether there’s a performance issue, and if so, whether it’s the network or back in the application. We’re not drilling deep into the application, because we’re not taking the full application logs. But if we have the augmented data, it opens up a whole set of new metrics for Kentik Detect. So instead of just bits per second, packets per second, or number of unique IPs, you now get retransmits, out-of-order packets, client latency, server latency, and application latency.

For NPM the emphasis is really on those last three, because we have anomaly detection and alerting that will tell you, for example, that the observed client latency is now 10x normal, and show you that the network latency is fine and the application latency is high. That’s what people doing NPM are looking for. What do you do once you know that? That’s the alerting part, which we’ll get to when we talk about DDoS and our new alerting system. But in our opinion, alerting should cover not just attacks but also performance and other application issues.
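A minimal sketch of the kind of check described above, assuming each flow summary carries separate client-side network, server-side network, and application latency values and that a baseline for each already exists. The `Latency` type, the `diagnose` function, the field semantics, and the 3x factor are all illustrative assumptions, not Kentik’s detection logic.

```python
from dataclasses import dataclass


@dataclass
class Latency:
    client_ms: float  # assumed: network round trip on the client side
    server_ms: float  # assumed: network round trip on the server side
    app_ms: float     # assumed: application response time, excluding network


def diagnose(now: Latency, baseline: Latency, factor: float = 3.0) -> str:
    """Say which latency component rose more than `factor` times its baseline."""
    network = (now.client_ms > factor * baseline.client_ms
               or now.server_ms > factor * baseline.server_ms)
    application = now.app_ms > factor * baseline.app_ms
    if network and application:
        return "network and application"
    if network:
        return "network"
    if application:
        return "application"
    return "normal"
```

With a baseline of 10 ms client, 3 ms server, and 40 ms application latency, a reading of 12 / 3 / 400 ms comes back as “application”: the network looks fine and the application is slow.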

We’ve got the BGP data, so we lay it out and show you on a path basis where we think the problem is. If you see a problem and it looks like it’s the network, you can actually see whether it’s the data center, your immediate upstream provider, or somewhere off in the middle.
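One simple way to turn per-flow latency plus BGP AS paths into a “where is it?” hint is to ask which AS shows up on slow paths far more than on fast ones. The sketch below does that with plain counting; it is a hypothetical illustration (the function name, the threshold, and the input shape are made up), not the method Kentik Detect uses.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple

Flow = Tuple[Tuple[int, ...], float]  # (AS path, observed latency in ms)


def rank_suspect_ases(flows: Iterable[Flow], slow_ms: float) -> List[int]:
    """Rank ASes by the share of flows crossing them that exceed `slow_ms`."""
    total = defaultdict(int)
    slow = defaultdict(int)
    for as_path, latency_ms in flows:
        for asn in set(as_path):  # count each AS once per flow, even with prepending
            total[asn] += 1
            if latency_ms > slow_ms:
                slow[asn] += 1
    # Highest slow share first; ties broken by the absolute number of slow flows.
    return sorted(total, key=lambda asn: (slow[asn] / total[asn], slow[asn]), reverse=True)
```

In a toy input where the two slow flows both cross AS 64497 and the fast ones cross AS 64498, AS 64497 ranks first, ahead of the shared upstream AS 64496. A destination AS seen on a single slow flow can also rank high, so a real system would want a minimum flow count per AS before blaming anyone.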

Our customers actually send our reports to pinpoint problems for upstream neighbors.

We’ve got an advertising technology customer, and ad tech is a really interesting space for network sophistication. Or at least, I’ll say for network frustration when they don’t have the data. You’ve got all these companies that compete with each other, but they need to cooperate to give 50-millisecond SLAs, because if they don’t then the web pages don’t load. When people load a web page and see ad-tech stuff blocking it, they get upset. So where’s the problem? Often our customers actually have to send reports from our system to show their upstream neighbors where that problem is. That may sound like finger pointing, but what we’ve seen is that it’s actually taken very constructively compared to, I don’t know, last decade, when I just saw endless organizations that didn’t want to admit problems. Now people understand that there are problems, and they’re really just looking to collaborate. And with tools that are siloed, you can’t really do that.

So for us NPM is about application versus network performance problems, and identifying where in the network, or where in the application, the problem might be.

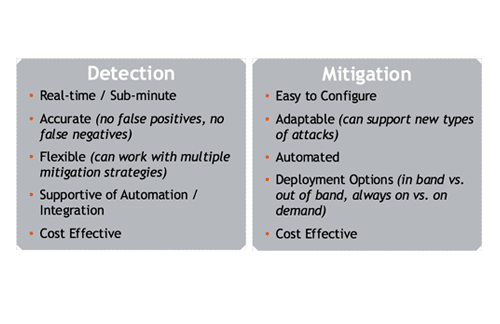

On DDoS Protection

It’s not possible to prevent being attacked. And I think there are attacks that could be generated, though we haven’t seen them yet, that very few people other than maybe Google or Akamai would be able to stop. But for the 99% of attacks that actually happen on the Internet, it’s a tractable problem. You can detect them, and you can mitigate them. It might not be as cheap as you would like, but it is absolutely a solvable problem, even at the terabit level.

We’re taking the traffic summary data live, whether it’s sFlow, NetFlow, or IPFIX, and again from hosts, routers, or load balancers. We have a continually running streaming data system that learns what the usual traffic is, and most of our customers are really looking at anomalies with minimum thresholds. I don’t want to know that 3 kilobits went to 3 megabits; that’s not really interesting. I want to look at things that are in the tens of megabits going up much higher.
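The “minimum threshold” idea combines an absolute floor with a relative test against a baseline. The sketch assumes a 10 Mbps floor (the low end of the “tens of megabits” mentioned above), a 5x ratio, and a median-of-history baseline; all three and the function name are illustrative choices, not Kentik defaults.

```python
from statistics import median
from typing import Sequence


def is_attack_candidate(history_bps: Sequence[float], current_bps: float,
                        min_bps: float = 10e6, ratio: float = 5.0) -> bool:
    """Flag traffic that is both large in absolute terms and far above its baseline."""
    # Absolute floor: 3 kbps jumping to 3 Mbps is a 1000x rise but not interesting.
    if current_bps < min_bps:
        return False
    # Median of recent samples as the baseline, so one earlier spike doesn't inflate it.
    baseline = median(history_bps) if history_bps else 0.0
    return current_bps > ratio * baseline
```

The floor is what keeps the 3 kbps to 3 Mbps jump from firing even though it is a thousandfold increase; the median keeps a single earlier spike from dragging the baseline up.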

You can alert if your traffic volume is way above baseline or fits a known DDoS pattern.

So you’ve got baselines built in our system, you’re looking at traffic, and you say, oh, that’s a problem. Then we can look back in more detail at what the traffic volume has been, and if it’s way above normal, especially for a known kind of DDoS pattern, we can have that trigger an alert. What people want to do in response to the notification is a business question for them. We have people who automatically trigger mitigation. We have people who want the big red button, so it opens a ticket, and they log in and push that button to do something about it. And we have people who just want to be notified, and then examine it on their own.
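The three response styles map naturally onto a per-customer policy. The sketch below is hypothetical (the enum, the action strings, and wherever they would actually be sent are all assumptions) and only shows that detection is the same in every case while the follow-up differs.

```python
from enum import Enum
from typing import List


class ResponseMode(Enum):
    AUTO_MITIGATE = "auto"      # start mitigation immediately
    RED_BUTTON = "button"       # open a ticket; a human approves mitigation
    NOTIFY_ONLY = "notify"      # just tell someone and let them investigate


def plan_response(target: str, observed_bps: float, mode: ResponseMode) -> List[str]:
    """Return the ordered actions for one alert under the customer's chosen mode."""
    actions = [f"notify: {target} receiving {observed_bps / 1e6:.0f} Mbps"]
    if mode is ResponseMode.AUTO_MITIGATE:
        actions.append(f"mitigate: {target}")
    elif mode is ResponseMode.RED_BUTTON:
        actions.append(f"ticket: approve mitigation for {target}")
    return actions
```

Keeping the mode as data rather than code paths means the same alert pipeline can serve all three kinds of customers.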

Over the last year, really since late 2015, we’ve been dealing with larger and larger customers who say, “We need a protection solution that has open, big-data, real-time visibility and detection, but then ties to box, appliance, and cloud-provider mitigation.” The two providers for which we’ve heard the most requests for that kind of integration are Radware and A10, so those are the mitigations we’ve built. In terms of a complete mitigation solution, you can detect, you can do RTBH, you can use that to drive Flowspec, and we also have an end-to-end integration running in production that drives Radware and A10 appliance-based solutions. We’ll also be integrating with some cloud-based solutions that a lot of our customers use.
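For readers less familiar with the two BGP-based options: RTBH typically announces a host route for the victim tagged with a blackhole community, such as the well-known BLACKHOLE community 65535:666 from RFC 7999, so upstream routers drop everything sent to that address. Flowspec (RFC 5575, since obsoleted by RFC 8955) distributes match-and-action rules instead, so only the attack signature is dropped. The sketch below just builds both as plain Python data; the structures and function names are hypothetical and are not a router configuration format or a Kentik API.

```python
import ipaddress
from dataclasses import dataclass, field
from typing import Dict, List, Union

BLACKHOLE_COMMUNITY = "65535:666"  # well-known BLACKHOLE community (RFC 7999)

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


@dataclass
class RtbhRoute:
    prefix: Network
    next_hop: str
    communities: List[str] = field(default_factory=lambda: [BLACKHOLE_COMMUNITY])


def host_prefix(ip: str) -> Network:
    # /32 for IPv4, /128 for IPv6: only the attacked address is sacrificed.
    addr = ipaddress.ip_address(ip)
    return ipaddress.ip_network(f"{addr}/{addr.max_prefixlen}")


def rtbh_route(victim_ip: str, next_hop: str) -> RtbhRoute:
    return RtbhRoute(host_prefix(victim_ip), next_hop)


def flowspec_rule(victim_ip: str, protocol: int, source_port: int) -> Dict[str, dict]:
    """Drop only the attack signature (e.g. UDP from port 123) toward the victim."""
    return {
        "match": {
            "destination": str(host_prefix(victim_ip)),
            "protocol": protocol,
            "source-port": source_port,
        },
        # A traffic-rate action of 0 means discard (RFC 5575, RFC 8955).
        "then": {"traffic-rate": 0},
    }
```

The design trade-off shows in the shapes: the RTBH route drops all traffic to the victim, legitimate traffic included, while the Flowspec rule only drops, say, UDP from source port 123 (a common NTP amplification signature).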